China Is Building the Administrative Operating System for Autonomous AI

Beijing’s new intelligent agent policy is not a narrow AI rulebook. It is a blueprint for a governed machine society, where autonomous software actors have identities, permissions, registries, standards, audit trails and recall mechanisms.

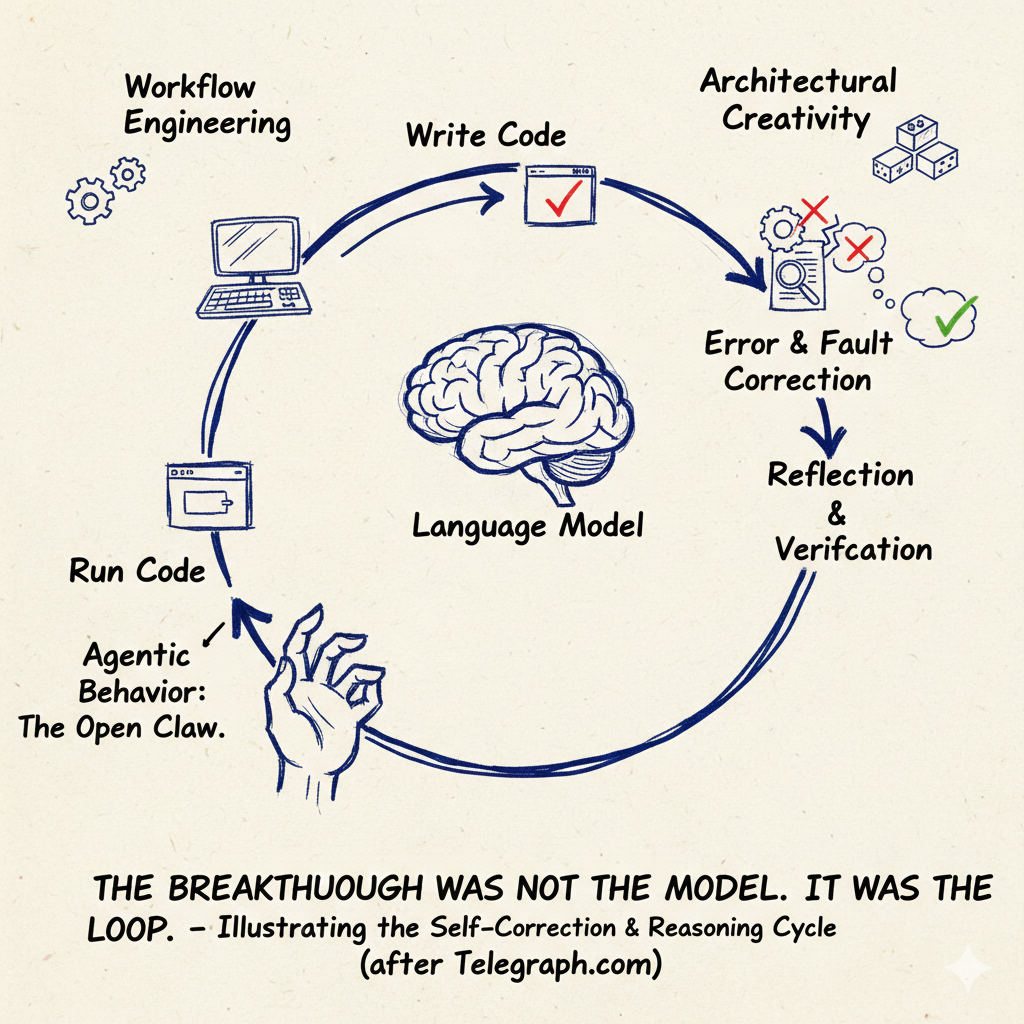

The West is still arguing about chatbots. China is preparing for agents as infrastructure. Once AI systems can act, buy, schedule, operate tools, move through networks, call other agents, and touch factories, courts, hospitals, banks and media systems, the decisive question is no longer model performance. It is administrative control. Who is the agent? Who authorised it? What can it access? Where is it registered? What standard does it speak? Who certifies it? Who recalls it when it fails?

China’s latest policy on intelligent agents should not be read as another bureaucratic note about artificial intelligence. It is more important than that. It is an early map of how a state intends to govern autonomous software once software stops merely answering questions and begins carrying out tasks.

The document, issued on May 8, 2026 by the Cyberspace Administration of China together with other central departments, defines intelligent agents as systems with autonomous perception, memory, decision-making, interaction and execution capabilities. That definition matters. A chatbot talks. An agent acts. A chatbot can mislead. An agent can transact, route, recommend, decide, invoke tools, call other agents and touch real systems.

That is why the Chinese document is not simply about safety. It is about the administrative layer that must sit above machine autonomy. Beijing is designing the operating rules for a future in which millions of software actors move through digital and physical systems with varying degrees of independence.

The key shift

The old internet organised users, websites, servers, platforms and apps. The next internet will also need to organise agents. That means identity, discovery, permission, audit, certification, liability and revocation. China’s policy recognises that the real chokepoint is not only the model. It is the administrative system around the model.

The most revealing phrase in the document is the call to develop an “intelligent internet.” That phrase is not decorative. It points to a network in which intelligent agents can be registered, discovered, authenticated and connected. The policy calls for research into an intelligent agent registration platform, digital identity management, retrieval and discovery, capability declaration, compliant payment, security protection and conflict resolution.

This is where the policy becomes strategically important. Beijing is not merely asking how to stop harmful outputs. It is asking how autonomous agents should be made visible to the system. Visibility is power. A registered agent can be inspected. An identified agent can be authorised. A certified agent can be trusted. A non-compliant agent can be blocked, recalled or punished through the platform that permits it to operate.
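The logic of registration, certification and recall can be sketched in a few lines of Python. This is an illustration only, not anything specified in the policy: the `AgentRegistry` class, its method names and the status values are hypothetical, chosen to show how visibility and revocation might hang off a single record.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    REGISTERED = "registered"
    CERTIFIED = "certified"
    RECALLED = "recalled"


@dataclass
class AgentRecord:
    agent_id: str
    developer: str
    capabilities: frozenset  # declared capability names
    status: Status = Status.REGISTERED


class AgentRegistry:
    """Hypothetical registry: an agent the registry cannot see cannot operate."""

    def __init__(self):
        self._records = {}

    def register(self, agent_id, developer, capabilities):
        if agent_id in self._records:
            raise ValueError(f"{agent_id} is already registered")
        record = AgentRecord(agent_id, developer, frozenset(capabilities))
        self._records[agent_id] = record
        return record

    def certify(self, agent_id):
        record = self._records[agent_id]
        if record.status is Status.RECALLED:
            raise ValueError("a recalled agent cannot be certified")
        record.status = Status.CERTIFIED

    def recall(self, agent_id):
        # Recall is a status change on the record, not a request to the agent.
        self._records[agent_id].status = Status.RECALLED

    def may_operate(self, agent_id, capability):
        record = self._records.get(agent_id)
        if record is None:
            return False  # unregistered agents are blocked by default
        if record.status is Status.RECALLED:
            return False
        return capability in record.capabilities
```

The design point is that every check runs through the record: an unregistered agent and a recalled agent are refused by the same gate, and an agent can only use the capabilities it declared.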

The language of the document is cautious, but the architecture is ambitious. It covers fundamental model research, specialised industry models, high-quality datasets, long-term memory, tool use, multi-agent collaboration and agent interoperability. It calls for toolchains for research, testing, deployment, operation and maintenance. It calls for security tools to detect adversarial examples and behavioural anomalies. It calls for the ability to detect, intervene, block and recover from non-compliant agent behaviour.

That is not the vocabulary of a consumer technology market. It is the vocabulary of a regulated machine ecology.

The document’s standardisation section is the heart of the matter. China wants standards for key technologies, products, data exchange, application scenarios, quality evaluation, security assurance and trusted authentication. It specifically refers to standards for intelligent agent interconnection, including AIP, the Intelligent Agent Interconnection Protocol.

A protocol is not a slogan. Whoever shapes protocol shapes conduct. Protocols decide how agents describe themselves, how they are discovered, how they authenticate, how they invoke tools, how they exchange data and how they interact with other agents. In ordinary political language, this sounds dry. In infrastructure terms, it is decisive.

Why AIP matters

Agent interconnection standards are the roads, passports and customs posts of the agent economy. They determine who can enter, what credentials must be shown, what powers can be used, what tools can be called, and what record is left behind.

This is the difference between a market of clever tools and a governed layer of infrastructure. Western debate still often treats AI agents as products sold by firms. China is treating them as actors inside a national system. The agent has a developer. It has a deployment method. It has an interface protocol. It has a capability statement. It may have a certification status. It may be subject to registration, testing and recall.

The recall language is especially revealing. Cars are recalled when they are unsafe. Medicines are withdrawn when they are dangerous. Now China is contemplating problematic intelligent agents as products that can be taken out of circulation. That presumes an authority capable of identifying the product, locating its deployment, assessing its risk and enforcing removal.

The document is also explicit about decision authority. It distinguishes between decisions limited to the user, decisions requiring user authorisation and autonomous decisions by the agent. Users are to retain the right to know and final decision-making power. Agents are not to exceed the authority granted to them.

That is the administrative question at the centre of autonomous AI: delegation. Human beings will not manually approve every microscopic action an agent takes. But if agents act without limits, they become unbounded proxies. If they inherit the user’s full permissions, they become a security and legal nightmare. If they act under their own identity, then the system must know who the agent is, who delegated power to it, what it was allowed to do, and whether it stayed within that boundary.
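A minimal sketch of what scoped delegation could look like, assuming the three decision categories the document describes. The names `Tier`, `Delegation` and `Gate` are hypothetical, as is the idea of mapping each action to a tier; the policy does not prescribe an implementation.

```python
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    USER_ONLY = 1        # decision reserved to the user
    USER_AUTHORISED = 2  # agent may act once the user approves
    AUTONOMOUS = 3       # agent may act alone, within its scope


@dataclass(frozen=True)
class Delegation:
    user: str
    agent_id: str
    scopes: frozenset  # actions the user has delegated to this agent


@dataclass
class Gate:
    tiers: dict  # action name -> Tier (an assumed classification)
    audit_log: list = field(default_factory=list)

    def check(self, delegation, action, user_approved=False):
        # Unclassified actions fall back to the most restrictive tier.
        tier = self.tiers.get(action, Tier.USER_ONLY)
        in_scope = action in delegation.scopes
        if tier is Tier.USER_ONLY:
            allowed = False
        elif tier is Tier.USER_AUTHORISED:
            allowed = in_scope and user_approved
        else:
            allowed = in_scope
        # Every decision, allowed or refused, leaves a record.
        self.audit_log.append(
            (delegation.agent_id, delegation.user, action, allowed)
        )
        return allowed
```

Under these assumptions, an agent delegated `book_meeting` (autonomous) and `pay_invoice` (user-authorised) could book the meeting on its own, could pay only after explicit approval, and would be refused anything outside its scope. The audit log answers the questions raised above: who the agent was, who delegated to it, what it attempted and whether it stayed within bounds.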

This is why agent identity is becoming a global technical problem, not only a Chinese political concern. Recent technical work at the standards layer has focused on exactly the same pressure point: agents that can call tools, interact with services, discover one another and act across systems need verifiable identity, scoped authority and audit trails. The difference is that China is folding that technical problem into state policy earlier and more explicitly.

The document’s safety provisions are broad. They include data security, personal information protection, password protection, attack detection, access control, behaviour control, data poisoning, privacy leaks, algorithm tampering, system vulnerabilities and operational instability. It also warns against automated attacks, privacy violations, false information, online fraud and other criminal uses.

None of this is surprising. The more important point is how safety is operationalised. The answer is not merely content moderation. It is supply chain security, lifecycle management, platform rules, third-party evaluation, certification, information reporting, compliance self-testing, distribution platform management and industry self-regulation. In other words, China is building a control system that reaches from model development to agent deployment to platform distribution to user interaction.

The governance ladder

Low-risk office and entertainment agents may be governed through self-testing, reporting and platform management. Sensitive sectors may face registration, testing and recall. The document therefore does not imagine one flat rule for all agents. It imagines tiered control according to sector, risk and social impact.
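The ladder can be read as a simple lookup, sketched here under stated assumptions. The two control sets and the low-risk examples come from the description above; which sectors count as sensitive is not specified there, so the sketch takes that list as a caller-supplied parameter. The function name `controls_for` is hypothetical.

```python
LOW_RISK_SECTORS = frozenset({"office", "entertainment"})
LOW_RISK_CONTROLS = ("self-testing", "reporting", "platform management")
SENSITIVE_CONTROLS = ("registration", "testing", "recall")


def controls_for(sector, sensitive_sectors):
    """Return the controls for a sector under a two-rung ladder.

    `sensitive_sectors` is an assumption supplied by the caller.
    """
    if sector in sensitive_sectors:
        return SENSITIVE_CONTROLS
    if sector in LOW_RISK_SECTORS:
        return LOW_RISK_CONTROLS
    # Anything unclassified is surfaced rather than silently defaulted.
    raise LookupError(f"no tier assigned for sector: {sector}")
```

A real scheme would presumably weigh risk and social impact as well as sector; the sketch only shows that the rule is tiered rather than flat.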

The scope of application is vast. The policy lists scientific research, software development, manufacturing, energy, transport, agriculture, finance, terminal devices, cultural tourism, commercial services, education, healthcare, human resources, government services, judicial services, public safety, urban governance, bidding and tendering.

This breadth is not accidental. It shows that China sees intelligent agents as a general-purpose administrative and industrial technology. In manufacturing, agents are to optimise production scheduling, resource allocation, process parameters, product defect identification and integration with industrial robots. In energy, they are to assist with power dispatching, grid maintenance, environmental sensing and mineral exploration. In transport, they are to support safety supervision, emergency command and traffic dispatch. In finance, they are to assist with credit approval, transaction monitoring, fraud interception and anti-money-laundering work.

That is the industrial side of the operating system. The state wants agents embedded in the productive base.

But the political side is just as important. The document speaks of government services, judicial services, public safety and urban governance. Agents are to assist in approval processes, policy consultation, case material organisation, evidence review, legal document generation, safety monitoring, emergency dispatch, threat warning and infrastructure management.

The state is not merely regulating the agent economy from outside. It intends to use agents inside the state itself.

The most politically sensitive passage concerns information services. The document encourages information publishing departments and content dissemination platforms to develop agents for user analysis, topic planning, news gathering and editing, distribution and recommendation, intelligent review, public opinion guidance, emotional regulation and real-time translation.

This is the sentence that should make Western readers sit up. “Public opinion guidance” and “emotional regulation” are not neutral newsroom productivity functions. They show how agentic systems can become instruments for managing information flows, sentiment and political attention. In China, this fits openly within the governing model. In the West, similar systems may emerge through platforms, advertisers, security agencies and political consultancies, but under more fragmented and less honest names.

The media implication

The same agent that can plan topics, recommend content and translate text can also review, rank, suppress, redirect and emotionally tune information. The policy says this plainly. The question is not whether AI will enter media systems. It already has. The question is who controls the agent layer.

There is a temptation in the West to dismiss Chinese documents like this as propaganda or bureaucratic padding. That would be a mistake. The language is formulaic, but the structure is not empty. It identifies the real pressure points of agentic AI: identity, authority, interconnection, standards, testing, certification, platform governance, supply chain security and recall.

Those are the points at which power will settle.

The West has powerful model companies. China is trying to build the control architecture around the model. The model is only one layer. Above it sit tools, permissions, identities, registries, protocols, audits and sector rules. Below it sit chips, cloud, data centres, energy, devices and industrial systems. A serious AI strategy has to govern the full stack.

This is why the document should be read as industrial policy, internet policy, security policy and state capacity policy at the same time. It is about creating an ecosystem in which Chinese firms, universities, open source communities, terminal makers and state agencies can build and deploy agents within a common rule structure.

The open source section is also important. China wants domestic open source communities to develop agent frameworks, interfaces and toolchains, and to promote compatibility with open source chips, operating systems and large models. This is not just about innovation. It is about reducing dependency on foreign technical chokepoints. If the agent layer becomes the next internet layer, then relying on foreign standards, foreign identity systems and foreign tool protocols would be strategically dangerous.

The policy also calls for application stores, supply and demand platforms, pilot projects, open bidding, key scenario opening and international compliance. That means agents are to be commercialised, standardised and exported. China does not only want a domestic agent system. It wants Chinese agents and Chinese agent standards to travel abroad, adapted to local laws and customs where necessary.

Here lies the larger contest. The future of AI will not be decided only by whose model scores higher on a benchmark. It will also be decided by whose protocols become normal, whose identity systems are trusted, whose compliance tools are adopted, whose app stores distribute agents, whose certification becomes necessary and whose standards become embedded in industry.

That is why the phrase “administrative operating system” is the right one. China is not simply building intelligent agents. It is building the administrative grammar through which agents become permissible actors.

The model answers the question. The agent performs the task. The administrative operating system decides whether the agent exists, what it is called, what it may do, whom it represents, which tools it can invoke, how it pays, how it is audited, how it is stopped and who is blamed when it causes harm.

The West should read this document carefully, not because China’s answer is necessarily desirable (it is plainly compatible with a more centralised and politically managed information order), but because it recognises the problem earlier than much of the Western debate. Once machines can act, sovereignty moves to the layer that governs action.

That is the real lesson of Beijing’s intelligent agent policy. The chatbot age is ending. The age of registered, authorised, interconnected and monitored machine actors is beginning. China wants to write the rulebook before the rest of the world has agreed that there is a rulebook to write.

Sources and report

The central source is the May 8, 2026 implementation opinion on the standardised application and innovative development of intelligent agents, issued through Chinese official channels and reported by the State Council portal and Chinese state media.

- State Council of China report on intelligent agent policy

- Cyberspace Administration of China interpretation on intelligent agents and the intelligent internet

- OpenAtom AIP intelligent agent interconnection protocol materials

- China national standards platform entry on agent interconnection general architecture

- IETF draft on Agent Collaboration Protocols for the Internet of Agents

- IETF draft on Agent Identity Protocol
