AI Has Hit the Memory Wall

Artificial intelligence is no longer only a contest over smarter models. It is becoming a contest over memory, cables, racks, cooling, batching and the brutal physics of moving data fast enough to keep the machine alive.

This article explains the hidden machinery behind modern AI in plain language. It shows why faster answers cost more, why long context is expensive, why output tokens cost more than input tokens, why Nvidia racks matter, and why the next great AI breakthrough may not look like a cleverer chatbot, but like a better industrial system for moving memory.

The public sees a chatbot answering questions. The industry sees something colder: a vast industrial machine trying to move numbers fast enough to keep its expensive chips from standing idle.

That is the hidden story behind the next phase of artificial intelligence. The race is no longer only about making models cleverer. It is about feeding those models with memory quickly enough, batching users efficiently enough, and building hardware systems large enough to keep the whole machine moving.

The old image of AI was a brain. The better image now is a railway network attached to a warehouse, a power station and a port.

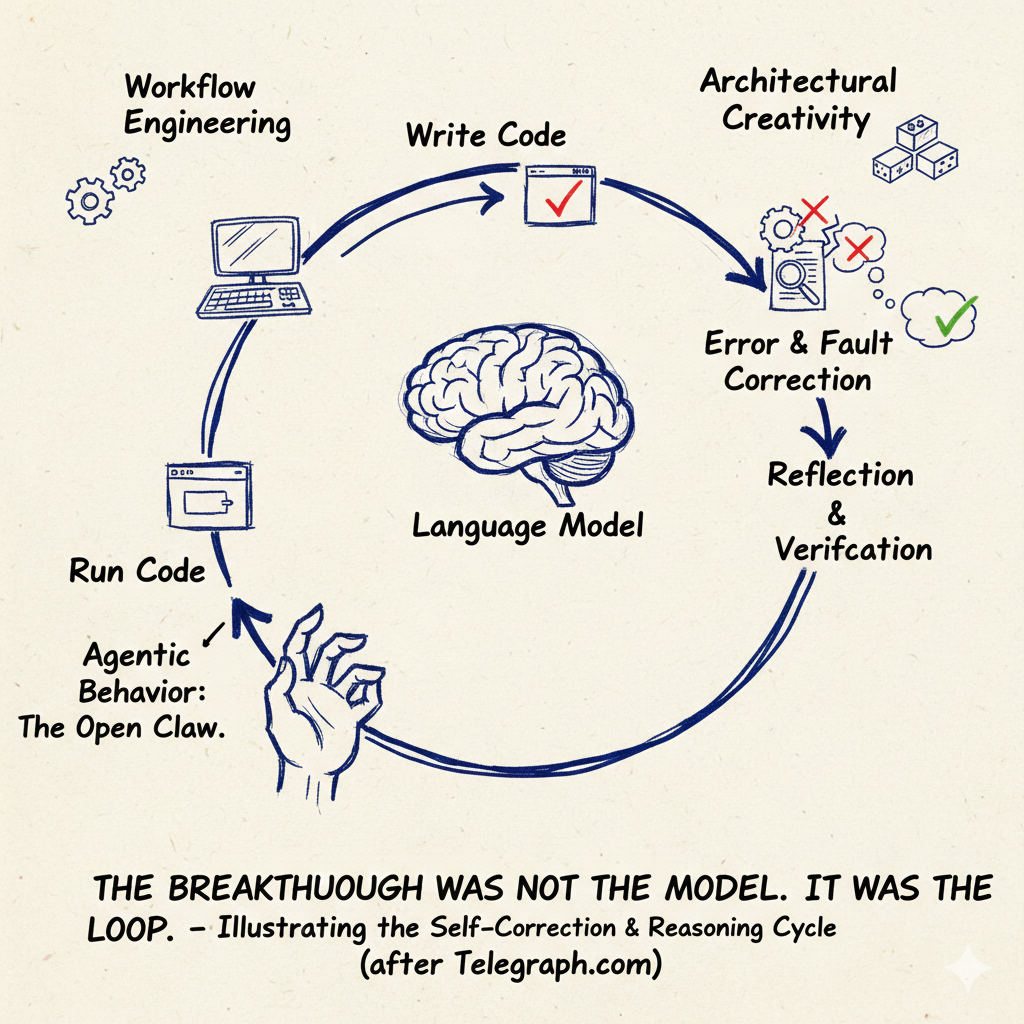

A prompt enters the system. It is broken into tokens. It joins other prompts from other users. It waits for a batch. It is pushed through a large language model. The model consults its weights, stores traces of the conversation in memory, generates the next token, then repeats the process, token after token.

None of this feels like conversation inside the machine. It feels like logistics.

A token is a small piece of text used by an AI model. It may be a word, part of a word, a punctuation mark or a short fragment. The model does not really read sentences the way humans do. It processes streams of tokens and predicts the next token.
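To make the idea concrete, here is a toy splitter. It is a sketch under loud assumptions: the vocabulary (`VOCAB`) is made up, the rule is simply "take the longest matching piece", and `tokenize` is a hypothetical helper rather than any provider's API. Production tokenizers learn vocabularies of tens of thousands of pieces from data, typically with schemes such as byte-pair encoding, so real token boundaries will differ.

```python
# A made-up vocabulary for illustration only; real tokenizers learn tens of
# thousands of pieces from data.
VOCAB = {"un", "believ", "able", "token", "s", " ", "!", "the", "model"}


def tokenize(text: str, vocab: set[str] = VOCAB) -> list[str]:
    """Split text into vocabulary pieces, always taking the longest match first."""
    max_len = max(len(piece) for piece in vocab)
    tokens: list[str] = []
    i = 0
    while i < len(text):
        for length in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab:
                tokens.append(piece)
                i += length
                break
        else:
            # No vocabulary entry matches: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


print(tokenize("unbelievable tokens!"))
# ['un', 'believ', 'able', ' ', 'token', 's', '!']
```

Even in this toy, “unbelievable” becomes three tokens and “tokens” becomes two, which is why token counts rarely match word counts.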

The most important thing to grasp is this: AI is expensive not simply because the model is large, but because the model must be moved through memory at enormous speed.

A powerful chip is useless if the information it needs cannot reach it quickly enough. That is the memory wall. The worker is fast, but the warehouse cannot deliver the goods quickly enough.

Academic work on systems such as vLLM makes the same point in more technical language: high-throughput LLM serving requires batching many requests, but the KV cache for each request (the model’s working memory of the conversation, explained below) is large, dynamic and difficult to manage efficiently. The authors of the PagedAttention paper, which introduced vLLM, describe KV cache memory as a major obstacle to serving more requests at once.

An LLM, or large language model, is the type of AI behind systems such as ChatGPT, Claude and Gemini. It is trained on huge amounts of text and learns patterns that allow it to predict and generate language. It does not store knowledge like a library. It stores mathematical relationships in billions or trillions of numbers.

The layman’s mistake is to imagine that the model is sitting there thinking about your question as a single isolated event. It is not. Your request is usually being processed alongside many others.

That is batching.

Imagine a railway station. Every few milliseconds, a train leaves. The passengers are user requests. If the train is full, the journey is efficient. If it leaves half empty, the operator wastes capacity.

Fast mode means the train leaves sooner. You wait less. But the operator may send the train before it is full, so each passenger pays more. Slow mode would mean waiting longer until the train fills up, making each seat cheaper.

This is why AI companies can charge more for faster responses. They are not just selling intelligence. They are selling priority access to a very expensive transport system.
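The trade-off can be sketched as a small simulation. It is a minimal sketch under stated assumptions: requests arrive once per millisecond, a batch (the train) holds at most 32 requests, and it departs either when it is full or when its oldest passenger has waited `max_wait_ms`. `simulate` and `BatchStats` are hypothetical names, and the numbers are illustrative rather than measurements from any real service.

```python
from dataclasses import dataclass


@dataclass
class BatchStats:
    avg_wait_ms: float  # average time a request waits before its batch departs
    avg_fill: float     # average fraction of batch slots actually used


def simulate(arrivals_ms: list[float], max_batch: int, max_wait_ms: float) -> BatchStats:
    """Send a batch when it is full, or when its oldest request has waited max_wait_ms."""
    waits: list[float] = []
    fills: list[float] = []
    queue: list[float] = []

    def depart(at_ms: float) -> None:
        waits.extend(at_ms - arrived for arrived in queue)
        fills.append(len(queue) / max_batch)
        queue.clear()

    for t in sorted(arrivals_ms):
        # The timer fired before this request arrived: the part-empty train left.
        if queue and t > queue[0] + max_wait_ms:
            depart(queue[0] + max_wait_ms)
        queue.append(t)
        if len(queue) == max_batch:
            depart(t)  # the train is full, so it leaves immediately
    if queue:
        depart(queue[0] + max_wait_ms)  # last, partly filled train
    return BatchStats(sum(waits) / len(waits), sum(fills) / len(fills))


arrivals = [float(i) for i in range(100)]  # one request per millisecond (illustrative)
fast = simulate(arrivals, max_batch=32, max_wait_ms=2)
slow = simulate(arrivals, max_batch=32, max_wait_ms=50)
print(f"fast: {fast.avg_wait_ms:.2f} ms wait, {fast.avg_fill:.0%} full")
print(f"slow: {slow.avg_wait_ms:.2f} ms wait, {slow.avg_fill:.0%} full")
```

With these made-up inputs, the fast setting keeps the average wait at about a millisecond but sends trains only about 9% full, while the slow setting waits roughly 17 ms on average and fills them to about 78%. Real serving engines such as vLLM use continuous batching, letting requests join and leave a running batch between generation steps, but the same tension between waiting and utilisation remains.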

Batching means serving many users at the same time. Instead of running the model separately for each person, the system groups many requests together. At each step, the model’s huge weights are read from memory once and then applied to every request in the batch. This lowers cost per user but can add waiting time.
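A rough, roofline-style estimate shows why. The sketch assumes a hypothetical dense model with 70 billion 16-bit parameters, running on an accelerator with 3 TB/s of memory bandwidth and 1,000 trillion operations per second. All four constants are illustrative, and the estimate deliberately ignores the KV cache and other overheads. Each step must stream every weight from memory once, however many users are in the batch, while the arithmetic grows with the batch.

```python
# Illustrative constants, not the specification of any real model or chip.
PARAMS = 70e9          # parameters in a hypothetical dense model
BYTES_PER_PARAM = 2    # 16-bit weights
MEM_BW = 3.0e12        # memory bandwidth, bytes per second
COMPUTE = 1.0e15       # arithmetic throughput, operations per second


def decode_step_ms(batch: int) -> float:
    """Time for one decoding step that yields one new token for each of `batch` users."""
    weight_read_s = PARAMS * BYTES_PER_PARAM / MEM_BW  # weights streamed once per step
    math_s = batch * 2 * PARAMS / COMPUTE              # about 2 operations per parameter per token
    return 1000 * max(weight_read_s, math_s)           # the slower of the two sets the pace


for batch in (1, 8, 64, 512):
    step = decode_step_ms(batch)
    print(f"batch {batch:4d}: step {step:6.2f} ms, per token {step / batch:7.3f} ms")
```

Under these assumptions the step time does not move at all between one user and 64, so the cost per token falls 64-fold. Only past a few hundred users does arithmetic, rather than memory, start to set the pace.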

This is also why scale matters. A small AI provider may have the same model, but not enough traffic to keep the trains full. A giant provider with millions of users can fill batch after batch. That gives it better economics.

AI therefore has a natural centralising tendency. The more users you have, the more efficiently you can use the hardware. The more efficiently you use the hardware, the cheaper each token becomes. The cheaper each token becomes, the more users you can attract.

That is not the economics of old software. It is closer to airlines, railways, telecoms and electricity grids.

The model itself has two big jobs. First, it must calculate. Second, it must fetch data from memory. The public talks about the first. The bottleneck is increasingly the second.

The model’s weights are like the stored knowledge of the system: the huge set of numbers learned during training. But every time the model generates a token, those weights must be read from memory. If only one user is being served, that entire read produces a single token, which is wasteful. If thousands of users are being served together, the cost is spread across them.

That is why batching is so powerful.

But there is another memory burden, and it is one of the hidden monsters of modern AI: the KV cache.

The KV cache is the model’s working memory of the conversation. When the model reads earlier tokens, it stores internal traces called keys and values. These let the model refer back to the previous text without recomputing everything from scratch. The longer the conversation, the larger this memory burden becomes.
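A toy version of the mechanism fits in a few dozen lines. It is a sketch under heavy simplifying assumptions: one attention head in one layer, four-number vectors, and a made-up `toy_project` function standing in for the learned projections that turn a token into a query, key and value. Every name here is hypothetical. The point is the bookkeeping: each token’s key and value are computed once, appended to the cache, and then read again by every later step.

```python
import math


class KVCache:
    """Keys and values for every token processed so far (one head, one layer)."""

    def __init__(self) -> None:
        self.keys: list[list[float]] = []
        self.values: list[list[float]] = []

    def append(self, key: list[float], value: list[float]) -> None:
        self.keys.append(key)
        self.values.append(value)


def toy_project(token_id: int, dim: int = 4) -> tuple[list[float], list[float], list[float]]:
    """Hypothetical stand-in for learned query/key/value projections."""
    base = [math.sin(token_id * (i + 1)) for i in range(dim)]
    return base, [0.5 * x for x in base], [x + 1.0 for x in base]


def attend(query: list[float], cache: KVCache) -> list[float]:
    """Scaled dot-product attention of one query over every cached position."""
    scale = 1 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in cache.keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, cache.values)) / total
            for j in range(len(cache.values[0]))]


def step(token_id: int, cache: KVCache) -> list[float]:
    """Process one token: store its key/value once, then attend over the whole cache."""
    query, key, value = toy_project(token_id)
    cache.append(key, value)
    return attend(query, cache)


def generate(prompt_ids: list[int], new_tokens: int) -> tuple[list[int], KVCache]:
    cache = KVCache()
    for token_id in prompt_ids[:-1]:
        step(token_id, cache)                 # earlier prompt tokens just fill the cache
    ids = list(prompt_ids)
    for _ in range(new_tokens):
        context = step(ids[-1], cache)        # reads every cached key and value
        ids.append(int(1000 * abs(sum(context))) % 50)  # hypothetical stand-in for sampling
    return ids, cache


ids, cache = generate([7, 3, 12], new_tokens=5)
print(len(ids), len(cache.keys))  # 8 7
```

After a three-token prompt and five generated tokens, the cache holds seven entries, one for every token processed so far (the newest token would be processed on the next step). Without the cache, every step would have to recompute keys and values for the whole conversation.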

The KV cache explains why long context is expensive. A 200,000-token context window is not merely a bigger text box. It means the system must carry a much larger working memory while generating each new token.

If you paste a long legal bundle into a model, the model does not just “read it” once and forget the burden. It must keep enough internal representation available to answer in context. That costs memory. More precisely, it costs high-speed memory, in both capacity and bandwidth: the cache must sit close to the chips and be read again for every new token the model generates.
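A back-of-the-envelope formula shows how quickly this grows. With standard attention, every layer stores one key and one value per key-value head per token, so the cache size is 2 × layers × KV heads × head dimension × bytes per number × tokens. The configuration below (80 layers, 8 KV heads of dimension 128, 16-bit numbers) is a hypothetical example in the range of published 70-billion-parameter models, and the 3 TB/s bandwidth figure is illustrative.

```python
def kv_cache_bytes(tokens: int, layers: int = 80, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """KV cache size for one conversation: a key and a value per layer, KV head and token.

    The default configuration is hypothetical, chosen only for illustration.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


MEM_BW = 3.0e12  # bytes per second (illustrative)

print(kv_cache_bytes(1))  # 327680 bytes, i.e. 320 KiB per token
for context in (4_000, 32_000, 200_000):
    size = kv_cache_bytes(context)
    print(f"{context:>7,} tokens: {size / 1e9:5.1f} GB, one full read {size / MEM_BW * 1e3:5.1f} ms")
```

Under these assumptions each token adds 320 KiB, a single 200,000-token conversation needs about 65.5 GB of cache, and merely reading that cache once, as each new output token requires, takes around 22 ms.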

This is why long context windows have not expanded indefinitely. They rose sharply from a few thousand tokens to hundreds of thousands, and in a few products to a million or more. But beyond that, the economics become harsh.

The FlashAttention paper, from researchers including Stanford’s Christopher Ré, framed attention as an input and output (IO) problem: the key was reducing reads and writes between the GPU’s high-bandwidth memory (HBM) and its much faster, but much smaller, on-chip memory (SRAM). That is exactly the deeper theme here. The hard problem is not only arithmetic. It is moving data between memory layers efficiently.
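The bookkeeping trick that makes this possible can be shown in plain Python. Instead of computing every attention score, storing them all and only then normalising, the scores are processed block by block while keeping just a running maximum, a running sum and a running output. This is a simplified sketch of the online-softmax idea behind FlashAttention, not the paper’s fused GPU kernel: on a CPU it saves nothing, and the function names are hypothetical. It only shows that the blockwise result matches the naive one.

```python
import math


def naive_attention(q: list[float], keys: list[list[float]], values: list[list[float]]) -> list[float]:
    """Materialise every score, softmax them, then mix the values."""
    scale = 1 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    top = max(scores)
    w = [math.exp(s - top) for s in scores]
    total = sum(w)
    return [sum(wi * v[j] for wi, v in zip(w, values)) / total for j in range(len(values[0]))]


def tiled_attention(q: list[float], keys: list[list[float]], values: list[list[float]],
                    block: int = 2) -> list[float]:
    """Stream keys/values in blocks, keeping only a running max, sum and output."""
    scale = 1 / math.sqrt(len(q))
    running_max = -math.inf
    running_sum = 0.0
    out = [0.0] * len(values[0])
    for start in range(0, len(keys), block):
        k_blk, v_blk = keys[start:start + block], values[start:start + block]
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in k_blk]
        new_max = max(running_max, max(scores))
        correction = math.exp(running_max - new_max)  # rescale earlier partial results
        running_sum *= correction
        out = [o * correction for o in out]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            out = [o + w * vj for o, vj in zip(out, v)]
        running_max = new_max
    return [o / running_sum for o in out]


q = [1.0, 0.5]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.5], [0.2, 0.3]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
exact = naive_attention(q, keys, values)
streamed = tiled_attention(q, keys, values, block=2)
print(all(abs(a - b) < 1e-9 for a, b in zip(exact, streamed)))  # True
```

On a GPU, the value of this pattern is that each block can stay in fast on-chip memory, so the full table of scores never has to be written out to the slower, larger memory.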

Attention is the mechanism that lets a model decide which earlier tokens matter for the next token. If you ask a question about paragraph 12 of a document, attention helps the model connect the current answer to that earlier passage. Attention over a long context is useful, but expensive, because each new token is compared with every token before it.

This also explains why output tokens usually cost more than input tokens.

Input can be processed in parallel. The model can read many tokens together. But output is sequential. The model must generate one token, then use that token to help generate the next one.

It is like unloading a lorry versus writing a sentence. Many workers can unload boxes at once. But a sentence still has to be written word by word.

So the model can process your prompt relatively efficiently. But when it speaks back, each new token requires another pass through the machine.
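A toy accounting makes the asymmetry visible. It assumes a single request with a 10,000-token prompt and a 500-token answer, ignores batching, and uses a hypothetical helper, `pass_sizes`, that lists how many tokens each sequential pass through the model handles. In this simplified picture the prompt goes through in one parallel pass (often called prefill), which also yields the first output token, and every further output token needs a pass of its own (decoding).

```python
def pass_sizes(prompt_len: int, output_len: int) -> list[int]:
    """Tokens handled by each sequential forward pass of one request (toy accounting).

    The first pass (prefill) takes the whole prompt at once and yields the first
    output token; each later output token needs a pass of its own (decode).
    """
    if prompt_len < 1 or output_len < 1:
        raise ValueError("prompt_len and output_len must both be at least 1")
    return [prompt_len] + [1] * (output_len - 1)


sizes = pass_sizes(prompt_len=10_000, output_len=500)
print(len(sizes))   # 500 sequential passes
print(sizes[:3])    # [10000, 1, 1]

# Share of one full weight read borne by each token, if every pass reads all weights once.
print(1 / sizes[0], 1 / sizes[1])  # 0.0001 1.0
```

If each pass has to stream the model’s weights from memory once, a prompt token carries one ten-thousandth of that cost, while each later output token carries all of it. That, in simplified form, is one reason output is priced higher.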

That is why output is expensive.

Cached tokens are cheaper for a related reason. If the system has already processed a document or prompt, it may be able to reuse part of the internal memory rather than rebuilding it from scratch.

Think of a lawyer. Reading a 200-page bundle for the first time is expensive. But if the lawyer has already marked the key passages and kept the notes on the desk, returning to the same bundle is cheaper.

AI caching is the same idea, expressed in memory hardware.

Cached tokens are tokens the system has already processed and can partly reuse. If a long document or prompt has already been converted into internal memory, the model may not need to recompute everything. That can make repeated use cheaper.
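Caching of this kind is usually keyed on the exact beginning of a prompt, because the stored keys and values for a token depend on every token before it. The sketch below is a toy under that assumption: a hypothetical `PrefixCache` class remembers prefixes in fixed-size blocks (4 tokens here) and reports how many tokens of a new request still have to be computed. Serving systems such as vLLM apply a similar block-level idea with hashing and eviction; this is not their code or any provider’s API, and providers’ pricing rules for cached tokens vary.

```python
class PrefixCache:
    """Toy prefix cache: reuses work for block-aligned prefixes seen before."""

    def __init__(self, block_size: int = 4) -> None:
        self.block_size = block_size
        # Each entry is a full prefix ending on a block boundary, because a block's
        # keys and values depend on every earlier token, not just its own.
        self._prefixes: set[tuple[int, ...]] = set()

    def process(self, tokens: list[int]) -> int:
        """Return the number of tokens that had to be computed from scratch."""
        bs = self.block_size
        full_blocks = len(tokens) // bs
        reused = 0
        while reused < full_blocks and tuple(tokens[:(reused + 1) * bs]) in self._prefixes:
            reused += 1
        for b in range(reused + 1, full_blocks + 1):
            self._prefixes.add(tuple(tokens[:b * bs]))
        return len(tokens) - reused * bs


document = list(range(100))  # hypothetical token ids for a long shared document
cache = PrefixCache(block_size=4)
print(cache.process(document + [900, 901]))       # 102: first read, nothing to reuse
print(cache.process(document + [902, 903, 904]))  # 3: only the new question is computed
```

Like the lawyer with the marked-up bundle, the second request pays only for what is new. A request that changes even the first token of the document gets no reuse at all, which is why cached prompts work best when the shared material comes first.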

Now comes the hardware story.

For years, people talked about GPUs as individual chips. That is now too small a unit. The real unit is increasingly the rack.

Nvidia’s GB200 NVL72 system is a rack-scale design connecting 72 GPUs with high-speed NVLink. Nvidia presents it as infrastructure for real-time, trillion-parameter inference and for mixture of experts models.

A GPU is a specialised chip used for AI computation. A 72-GPU rack is a cabinet-sized system containing 72 such chips connected by very fast internal links. The point is not just having many chips. The point is letting them communicate quickly enough to behave like one large AI engine.

Inside a rack, chips can talk quickly. Across racks, communication is slower. That matters because modern models are often split across chips.

If a model has many “experts”, different parts of the model may sit on different GPUs. The system must route each token to the right experts and bring the result back. Inside one rack, that can be fast. Across racks, it can become a bottleneck.

This is why the future of AI depends on objects that sound boring: switches, cables, power delivery, cooling, memory bandwidth and rack topology.

The cloud is not a cloud. It is metal, electricity, heat, water and cables.

MoE means Mixture of Experts. Instead of using the whole model for every token, the system activates only selected expert parts. It is like a hospital with many specialists: not every patient needs every doctor. This saves compute, but creates a routing problem.
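The routing step can be sketched as top-k gating, one common way of choosing experts: a small router scores every expert for the current token, only the k best are run, and their outputs are blended using softmax weights. The eight scalar “experts” and the router scores below are hypothetical, and real routers differ in details such as the scoring function and how they balance load across experts.

```python
import math
from typing import Callable


def top_k_route(router_logits: list[float], k: int = 2) -> list[tuple[int, float]]:
    """Choose the k highest-scoring experts and softmax-normalise their gate weights."""
    chosen = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    top = max(router_logits[i] for i in chosen)
    raw = {i: math.exp(router_logits[i] - top) for i in chosen}
    total = sum(raw.values())
    return [(i, raw[i] / total) for i in chosen]


def moe_layer(x: float, router_logits: list[float],
              experts: list[Callable[[float], float]], k: int = 2) -> float:
    """Run only the chosen experts and blend their outputs by gate weight."""
    return sum(weight * experts[i](x) for i, weight in top_k_route(router_logits, k))


# Hypothetical experts: expert s simply multiplies its input by s.
experts = [lambda x, s=s: s * x for s in range(8)]
logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.2]  # made-up router scores for one token
print([i for i, _ in top_k_route(logits)])  # [1, 4]: only 2 of the 8 experts do any work
print(moe_layer(1.0, logits, experts))
```

The saving is that six of the eight experts never run for this token. The cost is that the token must now be delivered to experts 1 and 4, wherever they happen to live.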

Mixture of Experts models are attractive because they reduce the amount of compute used per token. DeepSeek-V3, for example, was reported as having 671 billion total parameters but only 37 billion activated for each token, roughly one parameter in eighteen. That is the logic of sparse models: carry a vast institution, but call only the relevant departments.

The advantage is obvious. You save work.

The disadvantage is also obvious once you see it. Someone has to route the work. The experts must be placed somewhere. The chips must communicate. If the specialists are in the same building, fine. If they are scattered across the city, the patient waits.
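The “scattered across the city” problem can be put into rough numbers. The sketch assumes 1,024 tokens sitting on one rack, each routed to 2 of 16 experts, 14,336 bytes of activations per send, and illustrative link speeds of 900 GB/s inside a rack and 50 GB/s between racks. It also pretends the sends happen one after another, whereas real systems overlap them. All names and figures are hypothetical; only the comparison between the two placements matters.

```python
import random


def dispatch_cost(token_experts: list[list[int]], placement: dict[int, int], home_rack: int,
                  bytes_per_send: int, intra_bw: float, inter_bw: float) -> tuple[int, float]:
    """Count sends that leave the home rack and estimate total transfer time.

    placement maps expert id -> rack id. Bandwidths are in bytes per second,
    and sends are treated as sequential, which overstates real transfer times.
    Returns (cross_rack_sends, milliseconds).
    """
    local = remote = 0
    for experts in token_experts:
        for e in experts:
            if placement[e] == home_rack:
                local += 1
            else:
                remote += 1
    seconds = bytes_per_send * (local / intra_bw + remote / inter_bw)
    return remote, seconds * 1000


random.seed(0)
routes = [random.sample(range(16), 2) for _ in range(1024)]  # 2 of 16 experts per token
same_building = {e: 0 for e in range(16)}     # every expert in the tokens' own rack
across_city = {e: e // 8 for e in range(16)}  # experts 8 to 15 live in a second rack

for name, placement in (("one rack", same_building), ("two racks", across_city)):
    remote, ms = dispatch_cost(routes, placement, home_rack=0, bytes_per_send=14_336,
                               intra_bw=900e9, inter_bw=50e9)
    print(f"{name}: {remote} cross-rack sends, about {ms:.2f} ms")
```

In this made-up setup, roughly half the sends cross racks once the experts are split, and the estimated transfer time rises by roughly an order of magnitude.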

That is the AI infrastructure problem in miniature.

The industry is therefore being pulled in two directions. Models want to get larger, more specialised and more capable. Hardware wants to keep communication local, dense and fast. The architecture of the model and the architecture of the machine increasingly have to fit each other.

That is one of the reasons Nvidia became so central. It is not merely selling chips. It is selling the nervous system of the AI factory.

The physical constraints are almost embarrassingly literal.

Why not connect millions of chips together at full speed?

Because the cables have to fit. The rack has weight limits. The power has to be delivered. The heat has to be removed. The switches have limits. The connectors have density limits. The building has electricity limits.

The future of artificial intelligence may be constrained by cable geometry and cooling loops.

This sounds absurd only if one still thinks of AI as pure software. It is not. Frontier AI is now heavy industry.

That changes the economics.

The old software dream was that a small team with laptops could build a global company. Frontier AI looks less like that. It looks more like oil refining, telecoms, aviation or semiconductor manufacturing. Capital matters. Infrastructure matters. Supply chains matter. Access to power matters.

The clever model remains important. But the model sits inside a machine that only a few players can afford.

This is the strategic consequence: AI may become more centralised, not less. Open source models may spread. Local models may improve. Smaller systems will be useful. But the frontier, where the largest models operate with the longest context and the fastest response times, is structurally biased toward the companies that own the biggest infrastructure.

The popular debate asks whether AI will become conscious, dangerous, biased or superhuman. Those are serious questions. But underneath them sits a more immediate question:

Can the industry keep moving memory fast enough?

That question may decide the next phase of AI before philosophy does.

The next great AI breakthrough may not look like a smarter chatbot. It may look like a better rack, a denser cable system, a cheaper memory hierarchy, a more efficient batching engine, or an attention mechanism that reads less while understanding more.

The public will experience it as faster answers, longer context and lower prices.

The industry will experience it as fewer wasted memory reads, fuller batches, better cache reuse and higher utilisation.

The story of AI is therefore becoming the story of logistics. The model predicts words. The factory moves memory. The winner is the company that can do both at scale.