AI’s next moat is no longer scale alone

The first phase of the AI boom rewarded those who could copy a successful recipe and pour in more compute. The next phase looks harsher. Durable advantage is likely to belong to the labs that can combine enormous computing power, concentrated research talent, and enough institutional discipline to solve what today’s systems still do badly: memory, continual learning, long horizon planning, and consistency.

The earlier version of the AI race was comparatively simple. A workable formula emerged: train larger transformer models on more data, add more compute, refine the product surface, and ship. That formula still works, and it is still producing gains. But the easy part of that playbook has largely been harvested. The question now is not just who can scale the known recipe, but who can still improve the recipe itself.

That sounds abstract until you look at what current systems can and cannot do. On narrow, well specified technical tasks, frontier models have become startlingly good. In software engineering, the leading systems are now resolving large shares of benchmark tasks that were out of reach not long ago. In coding and tool use, progress has been real, fast, and commercially meaningful. But the same models still struggle when the task becomes longer, messier, more contextual, and more dependent on memory across time.

The split is already visible

Leading models now score very highly on tightly defined coding benchmarks. That is real progress.

But on longer horizon professional work, the best systems are still nowhere near dominant. The most important divide in AI is no longer raw fluency. It is whether a system can hold context, adapt, and remain coherent over time.

This is where the idea of the next moat starts to make sense. Compute is not just fuel. It is the workbench. A frontier lab does not need enormous computing capacity only to train a flagship model. It needs that capacity to run repeated expensive experiments, test fragile ideas at meaningful scale, discard dead ends, and then try again. A smaller lab may still publish clever papers or produce an impressive demo. What it often cannot do is validate enough large scale hypotheses quickly enough to stay at the edge.

That is why organisational concentration matters. Once the first scaling wave matures, frontier progress depends less on having one clever idea than on having a machine that can repeatedly generate, test, discard, and operationalise ideas. This is not only a technical advantage. It is an institutional one. It rewards labs that can concentrate researchers, compute, tooling, and managerial focus behind a single research agenda.

The concrete technical gaps are no longer mysterious. The models are strong, but patchy. They can reason well in one framing and then fail oddly when the task is rephrased. They can process vast context windows and still mishandle what matters. They can complete many short horizon tasks, yet lose the thread on extended workflows where earlier decisions must shape later ones. That is why memory and continual learning have become central research fronts rather than niche add ons.

And the race is already under way. Google has pushed two named lines of work in this direction. Nested Learning is framed as a way to mitigate catastrophic forgetting by treating learning as a set of nested optimisation problems rather than one flat process. Titans and MIRAS push test time memorisation, updating a model’s memory while it is running rather than relying only on offline retraining. Anthropic’s contextual retrieval work attacked a related bottleneck from another angle, reporting a 49 percent reduction in failed retrievals, rising to 67 percent when combined with reranking. The point is not that any one of these has solved the problem. The point is that the frontier has already shifted from “make it bigger” to “make it remember, adapt and stay coherent.”

What the missing pieces look like in practice

A useful memory system is not just a longer context window. It must decide what to store, what to recall, what to compress, and what to forget.
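The four decisions named above (store, recall, compress, forget) can be made concrete with a toy sketch. This is an illustration only, not how any lab's memory system works: the `ToyMemory` class, the caller-supplied `importance` score, the word-overlap ranking, and the truncation-based compression are all hypothetical stand-ins chosen for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    importance: float  # hypothetical score in [0, 1], supplied by the caller
    step: int          # logical time at which the item was written


@dataclass
class ToyMemory:
    capacity: int = 3            # assumed fixed budget of stored items
    min_importance: float = 0.2  # assumed threshold for storing anything at all
    items: list = field(default_factory=list)
    clock: int = 0

    def store(self, text: str, importance: float) -> bool:
        """Decide what to store: skip low-importance items outright."""
        self.clock += 1
        if importance < self.min_importance:
            return False
        self.items.append(MemoryItem(text, importance, self.clock))
        self.forget()
        return True

    def recall(self, query: str, k: int = 1) -> list:
        """Decide what to recall: rank stored items by word overlap with the query."""
        q = set(query.lower().split())
        scored = [(len(q & set(m.text.lower().split())), m) for m in self.items]
        scored = [(s, m) for s, m in scored if s > 0]
        scored.sort(key=lambda p: (p[0], p[1].importance), reverse=True)
        return [m.text for _, m in scored[:k]]

    def compress(self, max_words: int = 5) -> None:
        """Decide what to compress: truncate every item except the newest."""
        for m in self.items[:-1]:
            m.text = " ".join(m.text.split()[:max_words])

    def forget(self) -> None:
        """Decide what to forget: evict the least important item while over budget."""
        while len(self.items) > self.capacity:
            victim = min(self.items, key=lambda m: (m.importance, m.step))
            self.items.remove(victim)


mem = ToyMemory(capacity=2)
mem.store("user prefers metric units", 0.9)
mem.store("weather small talk", 0.1)            # rejected: below the threshold
mem.store("project deadline is Friday", 0.7)
mem.store("user asked about lunch options", 0.3)  # stored, then evicted
print(mem.recall("which units does the user prefer"))
```

Even in this toy form, a longer context window changes none of the four decisions. It only raises `capacity`. The hard research problem is replacing each hand-written rule with something learned.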

A useful continual learning system is not just more training. It must absorb new information without wrecking what it already knows.

It would be too neat to say that only a tiny cartel now matters. The evidence does not support that. Open weight models have closed the gap sharply. Stanford’s 2025 AI Index reported that the performance difference between leading closed and open weight models on some benchmarks fell from 8.0 percent to 1.7 percent in a year. That is not a trivial narrowing. It means diffusion remains powerful. It also means large labs do not get to declare victory merely because they are expensive.

There is another reason the cartel thesis is too crude: inference economics. Even if a giant lab invents the next architectural advance, that does not automatically decide the commercial winner. Stanford also found that the cost of querying a model at roughly GPT 3.5 level fell from $20 per million tokens in late 2022 to $0.07 by October 2024. That is a collapse in cost, not a marginal improvement. In practical terms, it means distribution, workflow embedding, and operational fit remain moats of their own. A lab can invent the breakthrough. Another company can still be the one that makes it cheap, reliable, and unavoidable inside real work.
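The two Stanford figures cited above are easier to compare once reduced to the same terms. The short calculation below uses only the numbers quoted in the text. The helper names `fold_reduction` and `percent_decline` are illustrative, not taken from the AI Index.

```python
def fold_reduction(before: float, after: float) -> float:
    """How many times smaller the later value is than the earlier one."""
    return before / after


def percent_decline(before: float, after: float) -> float:
    """Relative decline from the earlier value, in percent."""
    return 100.0 * (before - after) / before


# Cost per million tokens at roughly GPT 3.5 level, as quoted in the text.
cost_fold = fold_reduction(20.00, 0.07)
cost_drop = percent_decline(20.00, 0.07)

# Closed versus open weight benchmark gap, as quoted in the text.
gap_closed = percent_decline(8.0, 1.7)

print(f"Inference cost fell about {cost_fold:.0f}-fold ({cost_drop:.2f} percent).")
print(f"The closed/open gap shrank by about {gap_closed:.0f} percent of its starting size.")
```

On these numbers the price fell roughly 286-fold, and about four fifths of the closed/open gap disappeared in a year. Both point the same way: a lead built on capability alone erodes quickly.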

Telegraph.com does not pretend the future is settled. Our more defensible claim is hierarchical, not deterministic. Open models are not dead. Scale is not over. Inference economics matters. Product integration matters. Workflow dominance matters. But the labs with the best long term position may still be the ones that can do all of these at once while also discovering the next missing primitive.

The recent benchmark picture makes that tension plain. The best systems now post strong numbers on coding and long context evaluations. OpenAI’s latest public release claims marked gains in long horizon instruction following, tool use, and long document reasoning. Yet APEX-Agents, which tests cross application work in law, banking, and consulting, reported only 24 percent Pass@1 for its top model in the original benchmark. METR’s time horizon work makes the same broader point in another form: capability is improving, but it remains jagged, domain specific, and much weaker on messy real work than on cleanly scored tasks.

That gap, Telegraph.com's AI analysts argue, is the real subject. The next race is not only about intelligence in the abstract. It is about what sort of products become possible if these missing pieces are solved. A personal AI that actually remembers your preferences without drifting or inventing. Agents that can manage week long software or research tasks without losing context. Scientific systems that do not just answer questions, but build and refine lines of inquiry over time. That is the concrete surface area of the next phase.

A test for the thesis

This argument is falsifiable.

If by the end of 2027 no leading lab has shipped a frontier system that can learn continually, preserve coherence across extended tasks, and hold up on messy professional benchmarks rather than only clean technical ones, then the imitation phase may have more life left in it than this thesis assumes.

That also connects the political question more tightly to the technical one. If the next gains depend on giant compute pools, elite research teams, and expensive experimentation, then the economic rents are likely to concentrate before the benefits spread. The distribution problem does not come after the technical breakthrough. It is built into the technical structure of the race itself. The same institutions that can afford the next memory and continual learning breakthroughs are also the ones most likely to capture the value unless states, firms, and markets alter the distribution path.

So the cleaner conclusion is not that the frontier has closed. It has not. Nor is it that open models are a sideshow. They are not. It is that AI is moving out of its imitation phase. The next durable edge will probably belong to the organisations that can keep inventing after scaling becomes more expensive, more power hungry, and less sufficient on its own. The first wave rewarded those who could copy and enlarge. The next wave is likely to reward those who can remember, adapt, and stay coherent at scale.

You might also like to read on Telegraph.com

A thematic reading map of Telegraph.com AI coverage, grouped by the main fault lines now shaping the field: agents, infrastructure, market power, labour, China, law, and the social effects of machine systems.

- The Age of the AI Operator: Why 2026 Marks the Shift From Chatbots to Autonomous Agents

- The Breakthrough Was Not the Model. It Was the Loop.

- The First Non Human Economy Is Being Built by AI

- The Jarvis Layer: Why the Most Dangerous AI Is Not the Smartest One, but the One Closest to You

- OpenClaw, Moltbook, and the Legal Vacuum at the Heart of Agentic AI

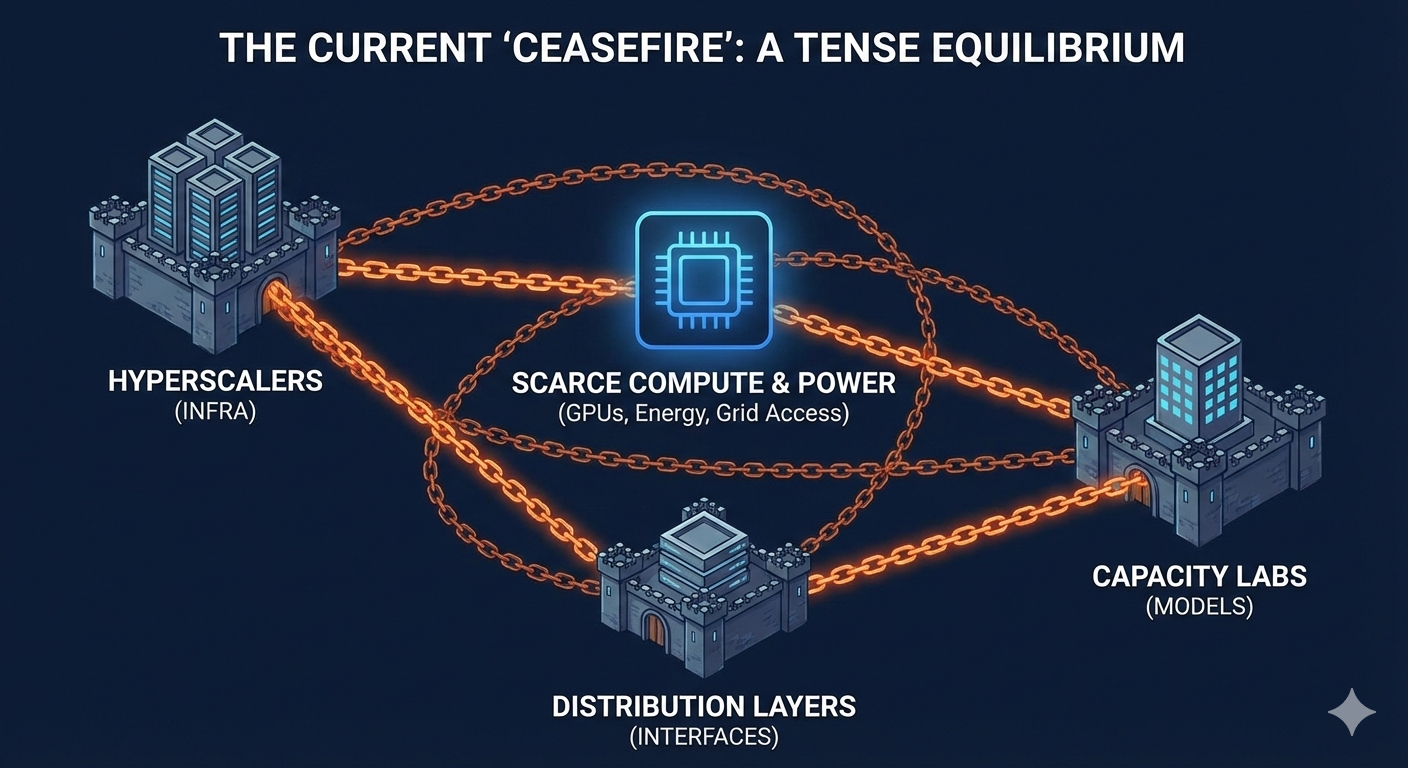

- The Compute Détente: Why Big Tech Is Buying Everyone and Why It Will Not Last

- AI Driven Data Centre Growth Is Colliding with Transformer Shortages and Raising the Risk of Prolonged Electricity Rationing in Britain

- Elon Musk Moves xAI Into SpaceX as Power Becomes the Binding Constraint on Artificial Intelligence

- The Cambrian Explosion of Robots Is Real and Most Will Die

- The Quiet AI Revolution No One Noticed Until It Was Everywhere

- AI Is Raising Productivity. Britain’s Economy Is Absorbing the Gains

- AI Is Raising Productivity. That Is Not the Same Thing as Raising Prosperity

- The Consulting Pyramid Is Breaking and McKinsey Just Admitted It

- The End of Rented Software: How Artificial Intelligence Breaks the Subscription Model

- Why Artificial Intelligence Is Breaking GDP and What Comes After

- AI Is Reordering the Labour Market Faster Than Education Can Adapt

- India’s AI Reckoning: When Intelligence Becomes Cheaper Than Labour

- Sadiq Khan Warns of Mass Unemployment. AI Poses a Deeper Threat to London

- AI Will Not Just Take Jobs. It Will Break Identities

- From Lecture Hall to Algorithm: How AI Is Rewriting Authority

- China’s AI Governance Model vs America’s Frontier Race: Why the Real Battle Is Over Who Can Control Intelligence at Scale

- China Is Not Trying to Beat Western AI. It Is Trying to Replace the Interface

- Why AI Is Forcing Big Pharma to Turn to China

- China’s Open AI Models Could Puncture the Artificial Intelligence Bubble

- AI Is Breaking the University Monopoly on Science

- The AI Safety Race Has Collapsed as Companies Admit They Cannot Afford to Slow Down

- Why the Fight Over Defining AGI Is the Real AI Risk

- Why Treating AI as a Friend or Confidant Is a Dangerous Mistake and How It Can Lead, in the Worst Cases, to Suicide

- Mamdani’s Win Shows How Human Contact Can Defeat the Algorithm and the Chatbot

- Getty Defeat and Meta Fair Use Win Signal Shift in AI Copyright Battles