The Age of the AI Operator: Why 2026 Marks the Shift From Chatbots to Autonomous Agents

As of March 2026, the most important development in artificial intelligence is no longer that models are getting slightly better at chat. The real change is structural. AI systems are beginning to operate software environments, call tools, coordinate specialist agents, and complete multi-step workflows while humans supervise goals rather than execute every action themselves. This article explains why that shift matters. It argues that the AI revolution has entered a new phase in which the key transformation is not conversational intelligence but operational intelligence. The central conclusion is simple: the real story of AI in 2026 is the emergence of software systems that artificial intelligence can operate autonomously.

- The rise of AI operators capable of executing multi-step tasks.

- The emergence of agent workspaces where specialised AI systems collaborate.

- The shift from humans operating software to humans supervising intelligent systems.

The Structural Change In Computing

The real story of artificial intelligence in 2026 is not that chatbots have become a little more fluent, a little faster, or a little more useful. It is that software is starting to change shape. For the first time, major AI systems are not merely answering questions inside a chat box. They are beginning to operate software environments directly, call tools, coordinate specialist agents, and complete multi-step workflows with humans supervising goals rather than executing every step themselves. OpenAI is explicitly positioning GPT-5.4 as its most capable model for professional work, citing stronger coding, better long-context handling, improved factual reliability, and state-of-the-art desktop navigation performance on the benchmark it reports. At the same time, OpenAI’s official developer stack now includes an Agents SDK and broader agent tooling designed to hand work off between tools and specialist agents while preserving traces and control layers for production use.

That is the structural change. The familiar model of computing has been simple for decades. A human opens software, clicks through menus, enters commands, and extracts a result. The software is passive. The user is active. What is emerging now is a different arrangement. The human states the objective. The AI plans the steps, calls the tools, works through the task, and returns with an output, or increasingly with a partially completed system. The software no longer merely waits for human hands. It is being approached as an environment that a machine can navigate.

The End Of The Chatbot Era

This is why so much current commentary about AI feels oddly shallow. Public debate still treats the field as though it were essentially an argument about whether the chatbot is smarter this month than it was last month. That was the correct debate in the first phase of generative AI. It is no longer the correct one. The question now is not whether the machine can write a competent paragraph. The question is whether it can operate a browser, read a screen, use a coding tool, query a database, hand a task to a specialist sub-agent, and keep enough context intact to finish what it started.

OpenAI’s own material now makes plain that this is the direction of travel. GPT-5.4 is framed not as a conversational novelty but as a model for professional work, coding, and multi-step automation. The company says it has improved performance on spreadsheets, presentations, and document work, reduced error rates relative to GPT-5.2, and pushed computer use further, including screenshot-based desktop navigation and keyboard and mouse actions. Its developer documentation for GPT-5.4, its agent tooling, and its prompt guidance repeatedly describes production-grade assistants, tool use expectations, and reliable long-context performance rather than mere conversation.

The Rise Of The AI Operator

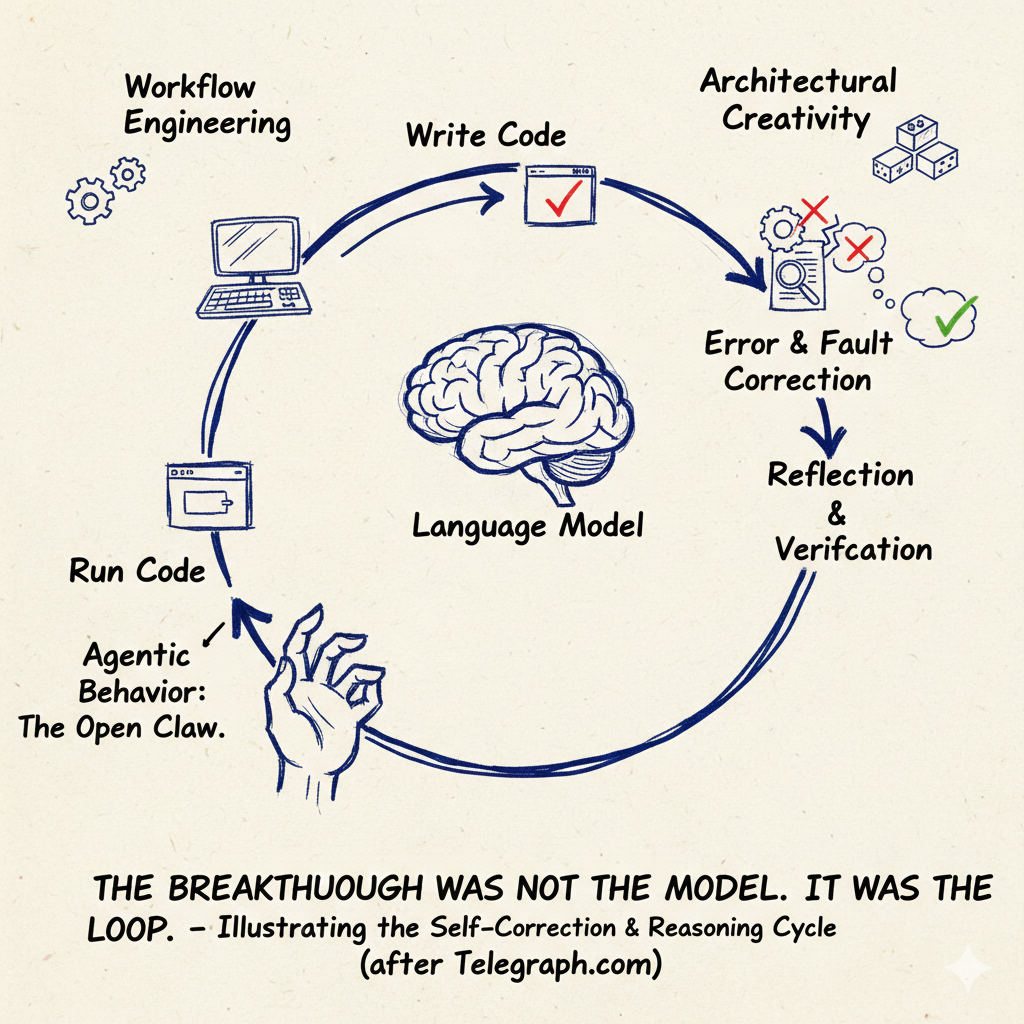

The distinction matters because it marks the rise of what can fairly be called the AI operator. Earlier generations of consumer AI were interpreters. They explained things, summarised things, drafted things, and occasionally hallucinated things. The human still had to do the work. If the model suggested a website layout, the user still had to build the page. If it recommended a technical fix, the user still had to implement it. If it proposed a workflow, the user still had to stitch the software together. What Telegraph.com’s own research shows again and again is a different loop. The user gives a goal. The model plans. The model executes. The model checks. The model revises. Whether the job is building a simple app, searching for information, wiring up an automation, or debugging a visual error, the same cycle is visible: goal, plan, execute, verify, revise.

That is a qualitative shift, not a quantitative one. It is the difference between a calculator and a junior operator. Not an infallible operator, certainly not a fully autonomous one, but an operator nonetheless.
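The goal, plan, execute, verify, revise cycle can be sketched as a bounded loop. This is a minimal Python illustration under stated assumptions: `plan`, `execute` and `verify` are hypothetical callables standing in for model calls and tool actions, not any vendor’s API, and `max_attempts` is an arbitrary cap after which the work is handed back to a human.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class LoopResult:
    output: str
    attempts: int
    passed: bool
    log: List[str] = field(default_factory=list)

def run_operator_loop(
    goal: str,
    plan: Callable[[str, Optional[str]], List[str]],  # hypothetical planner
    execute: Callable[[str], str],                     # hypothetical executor
    verify: Callable[[str, str], Optional[str]],       # returns None if the check passes
    max_attempts: int = 3,
) -> LoopResult:
    """Goal -> plan -> execute -> verify -> revise, bounded by max_attempts."""
    feedback: Optional[str] = None
    log: List[str] = []
    output = ""
    for attempt in range(1, max_attempts + 1):
        steps = plan(goal, feedback)                   # plan, or re-plan using feedback
        output = "\n".join(execute(s) for s in steps)  # execute each step in order
        feedback = verify(goal, output)                # check the result against the goal
        log.append(f"attempt {attempt}: {feedback or 'ok'}")
        if feedback is None:
            return LoopResult(output, attempt, True, log)
    # Out of attempts: return the last output, flagged for human review.
    return LoopResult(output, max_attempts, False, log)

# Toy stand-ins: the first draft fails its check, the revised plan passes.
def toy_plan(goal: str, feedback: Optional[str]) -> List[str]:
    return ["draft page"] if feedback is None else ["draft page", "fix layering"]

def toy_execute(step: str) -> str:
    return f"done: {step}"

def toy_verify(goal: str, output: str) -> Optional[str]:
    return None if "fix layering" in output else "overlay hides the button"

result = run_operator_loop("build a landing page", toy_plan, toy_execute, toy_verify)
print(result.attempts, result.passed)  # 2 True
```

The explicit cap is the design choice that matters: a loop whose verification never passes would otherwise run indefinitely, so the sketch ends by returning control to a person rather than by declaring success.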

The Emergence Of Agent Workspaces

A second transformation follows from the first. Once AI becomes capable of operating software, developers stop building only software products and start building workspaces for AI collaborators. This is already visible in the engineering culture emerging around these tools. The interesting artefacts are no longer just source files. They are plan files, skills, tool definitions, traces, handoffs, guardrails, context stores, and agent instructions. OpenAI’s Agents SDK is built around exactly these concepts. In its own documentation, the point is not just to call a model. It is to build systems in which a model can use additional context and tools, hand work to specialised agents, stream partial results, and preserve a full trace of what happened.
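To make handoffs and traces concrete, here is a framework-free Python sketch. It does not use the OpenAI Agents SDK or reproduce its API; `triage`, the specialist names and the keyword routing rule are all hypothetical, and each “agent” is a plain function where a real system would call a model. What it shows is the shape of the idea: a router hands each task to a specialist, and every step is written to a trace that a human can audit afterwards.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Agent = Callable[[str], str]

@dataclass
class Trace:
    # Each event is (who acted, the task, what they produced).
    events: List[Tuple[str, str, str]] = field(default_factory=list)

    def record(self, actor: str, task: str, result: str) -> None:
        self.events.append((actor, task, result))

# Hypothetical specialists; a real system would call a model with its own tools here.
SPECIALISTS: Dict[str, Agent] = {
    "data_agent": lambda task: f"[data] query drafted for: {task}",
    "coding_agent": lambda task: f"[code] patch drafted for: {task}",
    "general_agent": lambda task: f"[general] answer drafted for: {task}",
}

def triage(task: str) -> str:
    """Hypothetical keyword router; real systems often let a model choose the handoff."""
    lowered = task.lower()
    if "sql" in lowered or "database" in lowered:
        return "data_agent"
    if "bug" in lowered or "code" in lowered:
        return "coding_agent"
    return "general_agent"

def handle(task: str, trace: Trace) -> str:
    target = triage(task)
    trace.record("triage", task, f"handoff -> {target}")  # log the routing decision
    result = SPECIALISTS[target](task)
    trace.record(target, task, result)                    # log the specialist's output
    return result

trace = Trace()
handle("Fix the login bug", trace)
handle("Query the sales database", trace)
for actor, task, result in trace.events:
    print(f"{actor:<13} | {task:<24} | {result}")
```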

That is why the phrase “agent workspace” is more useful than the older language of prompt engineering. Prompt engineering always sounded temporary because it was temporary. It belonged to an era when the main problem was persuading a text model to produce a better answer. The new problem is architectural. Which agent should handle this task? Which tool should it call? Which knowledge should it retrieve? What should trigger it? When should it stop? What should happen if the request is unsafe, unrealistic, or too expensive? How should a human intervene? These are not prompt questions. They are systems questions.
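Several of those systems questions (when to stop, what to do about unsafe or expensive requests, when a human steps in) can be written down as an explicit run policy rather than left to a prompt. The sketch below is hypothetical: the step limit, the dollar budget and the action names are placeholder values chosen for illustration, not recommendations from any vendor.

```python
from dataclasses import dataclass
from enum import Enum

class Decision(Enum):
    CONTINUE = "continue"
    STOP = "stop"
    ASK_HUMAN = "ask_human"

@dataclass(frozen=True)
class RunPolicy:
    max_steps: int = 20         # placeholder: cap on actions per task
    max_cost_usd: float = 5.00  # placeholder: spend ceiling per task
    # Placeholder action names that always require human sign-off.
    needs_approval: frozenset = frozenset({"delete_records", "send_payment"})

    def check(self, step: int, cost_usd: float, next_action: str) -> Decision:
        """Decide whether the agent may take next_action at this point in the run."""
        if next_action in self.needs_approval:
            return Decision.ASK_HUMAN  # consequential action: escalate, don't act
        if cost_usd >= self.max_cost_usd:
            return Decision.STOP       # too expensive to continue
        if step >= self.max_steps:
            return Decision.STOP       # likely a runaway loop
        return Decision.CONTINUE

policy = RunPolicy()
print(policy.check(step=3, cost_usd=0.40, next_action="run_tests"))     # Decision.CONTINUE
print(policy.check(step=3, cost_usd=0.40, next_action="send_payment"))  # Decision.ASK_HUMAN
print(policy.check(step=3, cost_usd=7.10, next_action="run_tests"))     # Decision.STOP
```

The approval check runs first on purpose: a consequential action is escalated to a person even when it is cheap and early in the run.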

The Stack Of Agentic AI

Telegraph.com’s own research also makes clear that agentic AI is not one thing but a stack. At the bottom sits the model itself, trained on a broad body of knowledge. Above that comes retrieval, giving the system access to current or private information beyond its base training. Above that comes tooling, the ability to call an API, use a browser, run code, or interact with an application. Above that comes planning, where the system works out how to achieve a goal rather than following a rigid prewritten flow. And above that comes orchestration, where multiple agents, tools, and triggers are coordinated into a workable system. That stack matters because it explains why the field now feels so different. We are no longer looking at one model speaking in isolation. We are looking at models embedded in software structures that allow them to act.
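One way to see why the layering matters is to write each layer as a separate piece. In this Python sketch every component is a hypothetical stub: `model` stands in for a call to any language model, `DOCS` for a retrieval store, `TOOLS` for real integrations, and `plan` uses a fixed rule where a production system would let the model decide. The only point being illustrated is the stack itself, with orchestration as the code that wires the other four layers together.

```python
from typing import Callable, Dict, List, Tuple

# Layer 1: the model. A stub standing in for any language-model call.
def model(prompt: str) -> str:
    return f"model-response({prompt})"

# Layer 2: retrieval. Adds current or private information the model was not trained on.
DOCS: Dict[str, str] = {"refund policy": "Refunds are accepted within 30 days."}  # hypothetical store

def retrieve(query: str) -> List[str]:
    return [text for key, text in DOCS.items() if key in query.lower()]

# Layer 3: tooling. Actions the system can take, keyed by name.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda q: f"search-results({q})",
    "run_code": lambda src: f"executed({src})",
}

# Layer 4: planning. Turns a goal into (tool, argument) steps; a fixed rule here.
def plan(goal: str) -> List[Tuple[str, str]]:
    steps = [("search", goal)]
    if "calculate" in goal.lower():
        steps.append(("run_code", goal))
    return steps

# Layer 5: orchestration. Coordinates retrieval, tools and the model into one run.
def orchestrate(goal: str) -> str:
    context = retrieve(goal)
    tool_results = [TOOLS[name](arg) for name, arg in plan(goal)]
    return model(f"goal={goal}; context={context}; tools={tool_results}")

print(orchestrate("What is our refund policy?"))
```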

The Human Becomes The Supervisor

This leads to the third transformation, and perhaps the most consequential one. Humans are shifting from software operators to software supervisors. The old mental model of digital work was mechanical. Open the app. Press the buttons. Enter the values. Export the file. The new mental model is managerial. Define the goal. Check the constraints. Review the result. Redirect the process when it drifts.

That does not mean humans disappear. In fact, Telegraph.com’s own research suggests the opposite. Human supervision remains essential precisely because these systems are now powerful enough to do consequential work badly. The most revealing demonstrations are not the glossy success stories but the messy debugging stories. An agent builds something that almost works. The human notices that the real problem is not where the model thinks it is. A visual issue turns out to be a layering problem. A software bug turns out to be a false architectural assumption. A travel planner needs a guardrail because otherwise it will calmly hallucinate a week in Dubai for three hundred dollars. A swarm of agents becomes productive, then chaotic, then exhausting, unless someone imposes structure.
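The travel-planner case shows how small such a guardrail can be. The Python sketch below rejects a request when the stated budget falls below a plausible floor, before any itinerary is generated. The per-night cost figures are invented placeholders for illustration, not real price data; a working system would take them from a live pricing source.

```python
from dataclasses import dataclass
from typing import Tuple

# Invented placeholder floors (USD per night), NOT real price data.
MIN_NIGHTLY_COST_USD = {"dubai": 150.0}
DEFAULT_MIN_NIGHTLY_COST_USD = 60.0

@dataclass
class TripRequest:
    destination: str
    nights: int
    budget_usd: float

def check_trip(req: TripRequest) -> Tuple[bool, str]:
    """Return (allowed, message). Runs before the planner is asked for an itinerary."""
    floor = MIN_NIGHTLY_COST_USD.get(req.destination.lower(), DEFAULT_MIN_NIGHTLY_COST_USD)
    needed = floor * req.nights
    if req.budget_usd < needed:
        return False, (f"Budget ${req.budget_usd:,.0f} is below the estimated minimum "
                       f"${needed:,.0f} for {req.nights} nights in {req.destination}; "
                       "ask the user to revise instead of planning.")
    return True, "ok"

print(check_trip(TripRequest("Dubai", 7, 300)))
```

Putting the check in front of the planner, rather than asking the model to notice the problem itself, means an unrealistic request is refused deterministically instead of being answered fluently.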

A New Engineering Discipline

This is where even serious articles on AI often fail. They move too quickly from capability to inevitability. They take a clever demo and treat it as settled reality. The research behind this article points to a more defensible conclusion. AI-operated systems are real, but they are not mature in the sense that a power grid or a database engine is mature. They are probabilistic, often brittle, context-hungry, and in need of guardrails. Even proponents keep returning to the same themes: logging, tracing, testing, handoffs, review loops, explicit constraints, better instructions, better tool descriptions, better memory, and cleaner decomposition of work. Those are not the signs of a finished revolution. They are the signs of a new engineering discipline being born.

The Economic Consequences

Still, that discipline is already changing the economics of software work. The most important competitive effect may not be that large firms become infinitely more efficient. It may be that small teams become disproportionately more dangerous. This pattern has appeared before in technology. A new substrate appears. The incumbents talk loudly. The real innovation happens first at the edge. The web did not look most alive inside the old giants. Nor did mobile. Nor, in truth, did cloud in its earliest entrepreneurial uses. Agentic systems may produce the same pattern. A team of five with strong product sense, access to frontier models, and a willingness to build openly may now prototype, test, revise, and ship at a pace that would once have required a much larger organisation.

That does not mean the large companies vanish. It does mean many of them may struggle to absorb the productivity of their own engineers. Big firms are not only limited by technical ability. They are limited by process, appetite for risk, legal review, organisational politics, and the sheer friction of coordinating large internal systems. If agents speed up execution but the institution cannot speed up decision making, then the gain leaks away. The code can move faster than the company.

The Human Cost Of Acceleration

There is also a human cost to this transition that deserves more attention than it gets. One of the strongest themes in the interviews behind this research is not triumph but exhaustion. AI can accelerate output, but it can also increase cognitive drain. When easy tasks are automated away, human time shifts toward steering, checking, decomposing, arbitrating, and deciding. These are mentally expensive activities. The result can be a strange mix of exhilaration and depletion. Engineers produce more, experiment more, and often feel more capable, yet also more stretched. The cultural rules for this are not settled. If a person becomes radically more productive with AI, who captures the value? The firm? The worker? Both? None of the answers is clean. That argument has barely begun.

The Future Of Software

The deeper significance, though, lies beyond the workplace. If AI systems can operate software, then software itself becomes more fluid. Personal software, bespoke workflows, rapid forks, ephemeral tools, narrow use-case applications, and one-off interfaces all become easier to create. The old scarcity model of software rested on the fact that building even mediocre products took meaningful teams, meaningful time, and meaningful budgets. Agentic systems erode that scarcity. They do not abolish it, because maintenance, architecture, trust, compliance, and distribution still matter. But they erode it enough to change the shape of the market.

The best way to understand 2026, then, is not as the year the chatbot improved. It is the year the abstraction ladder moved again. Computing is starting to leave behind an era in which humans directly drove every interface. In its place is emerging a world in which humans define aims and machine systems navigate the digital terrain on their behalf. The real story is not chat. It is operation. Not better answers, but software that can increasingly act. Not the disappearance of human judgement, but its relocation upward, away from the button press and toward the command, the constraint, the review, and the decision.

That is the structural change now underway. And once you see it in those terms, it becomes very hard to unsee.
