AI is making execution cheap, forcing software to shift from building code to deciding what to build

AI is not just getting better at answering questions. It is making execution cheap. Once software can be drafted, prototyped, tested and iterated at far lower cost, the real bottlenecks move upward: judgment, trust, workflow design, permissions, and control over the environment in which real work happens.

The next AI disruption is not merely that models are getting smarter. It is that execution is becoming cheap. Once teams can generate code, prototypes, workflows and task chains at a fraction of the old cost, software stops being constrained mainly by the supply of coders and starts being constrained by something else: taste, trust, workflow design, permissions, and control over the environment in which real work happens.

Why this matters now

For years the AI debate focused on intelligence in the abstract: could the model reason, code, summarise, or answer better than a human. That is no longer the whole question. The more immediate commercial shift is that AI is reducing the cost of trying things. Once execution gets cheaper, the scarce resource is no longer merely building the first version. It is deciding which version is worth trusting, keeping, governing, and scaling.

Put differently, software is now constrained less by the supply of coders than by judgment, interface design, trust, workflow, and control over the environment in which work is actually done.

That is the real significance of the claims now coming out of Anthropic and its rivals. Anthropic’s own language around Claude Mythos Preview is dramatic: it says the unreleased model has reached a level of coding capability that can surpass all but the most skilled humans at finding and exploiting software vulnerabilities, and it has launched Project Glasswing with companies including Amazon, Apple, Cisco, Google, JPMorganChase, Microsoft and the Linux Foundation to use the model defensively. At the same time, outsiders cannot independently verify the full scale of those claims, and critics have already accused Anthropic of mixing safety signalling with publicity. Both things can be true at once: the capability jump may be real, and the marketing may still be doing heavy work.

The core claim

AI is making execution cheap, and once execution gets cheap, software stops being constrained by code and starts being constrained by taste, trust, workflow, and control.

The cleaner way to understand the moment is through a simple framework: claim, mechanism, example, consequence.

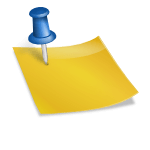

The claim is that AI is making execution cheap. The mechanism is that models can now generate code, test ideas, draft interfaces, connect to tools, and sustain longer multi-step tasks with less human supervision than before. Anthropic’s Managed Agents describes itself as a meta-harness designed to accommodate different sandboxes and task-specific harnesses around Claude, while Claude Code is explicitly sold as a system that can read a codebase, make changes across files, run tests, and deliver committed code. OpenAI, from the other side of the market, has been making the same underlying point in plainer terms: users now trust autonomous coding agents to follow instructions for longer, more reliable task runs.

Once execution gets cheaper, the scarcest thing is no longer the first draft of the software. It is choosing which version is worth keeping.

Example one: product design and prototyping

Imagine a product manager in 2023 who needed engineering time to test three interface ideas for a customer dashboard. In 2026, the same manager can sketch one in Figma Make, prompt another in GitHub Spark, and ask Replit Agent for a third. The bottleneck is no longer “can we build this mock-up by next month?” It is “which of these versions actually solves the user’s problem, and which one will create a mess later?” The work shifts upward from construction to selection.

You can already see that shift in consumer and enterprise tools. GitHub Spark says users can build apps with natural language or code and get a live interactive preview. Figma Make says users can start with a design and prompt their way to a functional prototype fast, and Figma’s own launch language is telling: it frames the product as a way to democratise the power of prototyping, because traditional prototyping is too slow or too inaccessible. Replit sells the same promise even more bluntly, telling users to describe an app idea and watch it get built and deployed. These are not niche research demos. They are commercial products built around the proposition that implementation, once the expensive part, is being commoditised.

The lesson of this first example is plain: once multiple working versions can be produced quickly, value shifts away from mere production and toward judgment. Someone still has to decide which version should survive contact with customers, compliance, maintenance burdens, and the long tail of failure.

Example two: legal work

The old fantasy was that AI would replace the lawyer. The reality is narrower and more disruptive. AI now makes first-pass execution cheaper: clause searching, issue spotting, draft review, redlining suggestions. But that does not eliminate legal judgment. It increases the premium on it. Once the machine can find the clause in seconds, the real question becomes whether the clause matters, how much risk it carries, and whether the institution can trust the system handling confidential material in the first place.

The legal field shows the shift clearly. Thomson Reuters says AI contract review can dramatically improve efficiency in clause searching, deadline management and review workflow, but stresses that reliability still depends on domain-specific legal data and human oversight. Spellbook, a Microsoft Word add-in, describes the practical version of that future: the contract stays in Word, the model performs a first-pass review, flags risks, suggests redlines, and returns in minutes what manual review often takes hours to produce. Spellbook also states the obvious point many evangelists prefer to blur: AI does not replace the associate; it shifts the associate toward quality control and strategic review.

That is execution getting cheap in a profession that bills by judgment. The value does not disappear. It relocates. Junior legal labour is less valuable when the machine can do the first pass in seconds; senior judgment becomes more valuable because someone still has to decide whether the flagged issue is truly dangerous, commercially material, or merely noise. In other words, the constraint is no longer typing. It is competence, supervision, and trust.

Example three: healthcare administration

Healthcare offers a cleaner example than the grandiose claim that AI will replace clinicians. What AI is already doing is making clerical execution cheaper: transcription, note drafting, documentation, workflow support. The machine takes on repetitive administrative labour. The institution remains responsible for privacy, governance, and clinical accountability.

This is why healthcare administration matters. NHS England’s guidance on AI-enabled ambient scribing does not frame these systems as autonomous doctors. It frames them as tools for clinical or patient documentation and workflow support. That is exactly where cheap execution bites first: repetitive clerical work, not the ultimate judgment call. The machine can draft, transcribe, organise and summarise; the institution still has to govern risk, privacy, and clinical responsibility. Again the bottleneck shifts. Once note production becomes cheaper, the scarce resource is not text generation but safe adoption inside a real system.

Why local control matters

The local-versus-cloud fight is not a theological dispute. It is a control problem. Who holds the files, the browser session, the permissions, the secrets, the audit trail, the approval flow? As execution gets cheaper, the trust boundary becomes the real product.

This is why the local-versus-cloud fight matters more than it first appears. Anthropic is now pushing in both directions at once. Claude Code on the web runs in Anthropic-managed cloud infrastructure, cloning repositories into isolated virtual machines and letting users review the resulting branch from another device. At the same time, Anthropic’s broader tooling push, including MCP, is built around connecting models to external data sources and tools securely. In both cases the question underneath is the same: where the trust boundary sits, and who controls it.

This is also where much AI commentary still goes wrong. It talks as if smarter models automatically create better products. They do not. Plenty of firms will be able to generate code. Far fewer will know how to shape it into something people will actually trust in law, banking, medicine, procurement, or internal operations. The winning systems will not simply be those with more intelligence. They will be those that fit into the user’s real workflow, reduce switching costs, preserve security boundaries, and ask for trust in increments rather than as a blind leap.

The real consequence

That is the larger consequence. Software is not about to disappear. It is about to proliferate. There will be more prototypes, more internal tools, more task-specific systems, more disposable applications, and far more software made by people who are not classical engineers. GitHub says you do not need to know how to code to build with Spark. Replit says no-code users can describe an app and have it built. Anthropic says Claude Code is now an entry point to software development for builders without an engineering background. Once that becomes normal, the premium moves away from writing syntax and toward understanding humans, institutions, incentives, and failure modes.

So the right way to read this moment is not: AI is learning to code. The right way to read it is harsher.

AI is making execution cheap. When execution gets cheap, code stops being the main bottleneck. Taste, trust, workflow and control take its place.

That is why this changes software. And it is why the people still talking as if the whole contest is about model IQ are already behind.

Key Sources

- The 2026 AI Index Report, Stanford HAI, April 2026. Source: hai.stanford.edu

- Project Glasswing: Securing critical software for the AI era, Anthropic, April 2026. Source: anthropic.com

- Scaling Managed Agents: Decoupling the brain from the harness, Anthropic Engineering, April 2026. Source: anthropic.com

- About GitHub Spark, GitHub Docs. Source: docs.github.com

- GitHub Spark: Dream it. See it. Ship it., GitHub. Source: github.com

- Figma Make: Create with AI-Powered Design Tools, Figma. Source: figma.com

- Replit: Build apps and sites with AI, Replit. Source: replit.com

- Introducing GPT-5.3-Codex, OpenAI, February 2026. Source: openai.com

- Guidance on the use of AI-enabled ambient scribing products in health and care settings, NHS England. Source: england.nhs.uk

- Buyer’s guide: AI for legal contract review and analysis, Thomson Reuters, October 2025. Source: legal.thomsonreuters.com

- How Lawyers Use AI to Review Contracts, Spellbook, April 2026. Source: spellbook.legal
