Dario Amodei is not warning about coding alone. He is describing a fight over who will control the operating system of modern work

What this article argues

First, coding is not the endpoint but the opening breach: the first major knowledge workflow that frontier models are absorbing at scale.

Second, the real contest is not over consumer apps but over who controls the cognitive infrastructure beneath modern work.

Third, Dario Amodei is selling both abundance and alarm at once, presenting AI as a force of extraordinary gain and extraordinary risk.

Fourth, the central political question is no longer whether these systems are powerful, but why the public should trust the companies building them to govern their own consequences.

Who is Dario Amodei?

Dario Amodei is the chief executive and co-founder of Anthropic, one of the small number of companies building frontier AI models at the highest level. Before founding Anthropic in 2021, he worked at OpenAI, where he helped lead research. He matters not only because Anthropic builds some of the most capable AI systems in the world, but because he has become one of the clearest public voices arguing that those systems will reshape labour markets, science, security, and politics on a very short timeline.

What is Anthropic?

Anthropic is a US AI company best known for building the Claude family of models. It sits among the leading frontier labs alongside OpenAI, Google DeepMind, and xAI. Anthropic presents itself as safety-focused and uses an unusual governance structure, including a Public Benefit Corporation form and a Long-Term Benefit Trust. In practice, it is part of the core race to build powerful general-purpose models and embed them into the tools, systems, and workflows on which other organisations depend.

Why is this controversial?

The controversy is not simply that AI is improving fast. It is that a handful of private firms are gaining extraordinary influence over systems that may automate parts of white-collar work, shape access to knowledge, and alter the balance between markets, governments, and citizens. Supporters argue these tools could accelerate science, medicine, and productivity. Critics argue they could concentrate power, weaken labour, deepen dependency on a small number of firms, and leave the public relying on corporate promises of safety rather than democratic oversight.

In our opinion, Dario Amodei is no longer speaking like a founder selling a useful product. He is speaking like a man trying to prepare the public for a reordering.

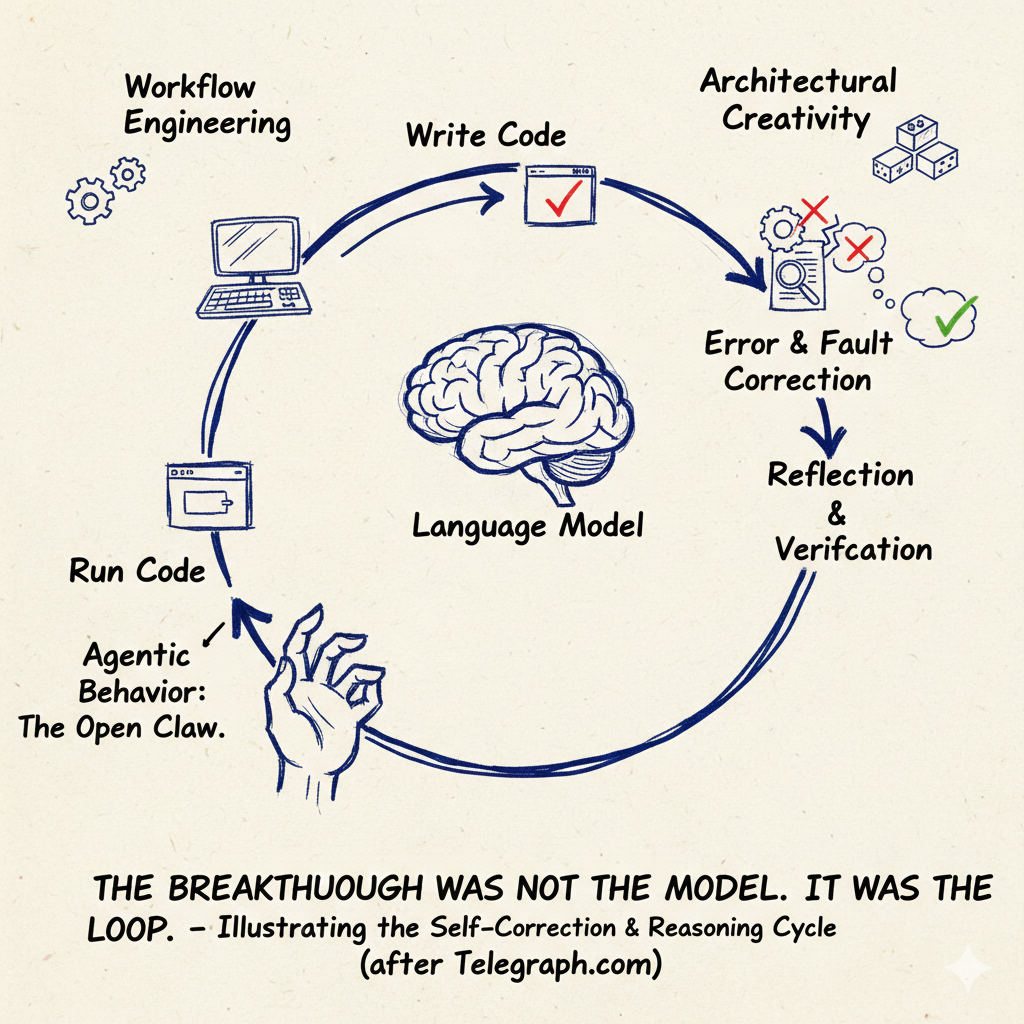

The most important thing in his recent remarks is not the line that coding is going away. That phrase is memorable, but it is not the real argument. The real argument is that coding is the first large-scale cognitive workflow to become machine-legible, machine-executable, and increasingly machine-delegated. It is the first breach, not the whole war.

That matters because coding has the right properties for early absorption. It is structured. It is digital from end to end. It is testable. It sits inside environments where output can be checked, revised, and run again. Amodei’s point is not merely that AI can help a developer move faster. It is that the actual writing of code is becoming the easiest part to hand over to the model, while the human remains in the loop for architecture, product judgment, verification, and responsibility. Even that remaining human layer, in his telling, is not stable. Coding goes first. Broader software engineering comes later. Then other forms of white-collar work come into range as the tools move from assistance to delegated execution.

The mistake many of our readers make is to treat this as a labour market article about programmers. It is not. Programming is only the easiest example of a larger transition. The deeper struggle is not over apps, assistants, or chatbots. It is over who owns the reasoning layer beneath them.

Amodei is quite open about the fact that Anthropic does not need to own every product surface if it can become the underlying intelligence inside existing systems. That is why the app debate is, at bottom, a distraction. The real prize is to become the hidden operating system of work: the layer that sits inside documents, spreadsheets, code repositories, enterprise tools, internal workflows, and eventually decision-making itself.

That is a larger and more durable form of power than consumer attention alone. A consumer app can be replaced. A reasoning substrate is harder to remove once institutions have built workflows around it. Strip away the jargon and the picture is simple enough. A company that controls the underlying model layer does not need to win every interface battle. It only needs to become the dependency others cannot easily replace.

That is why the frontier firms talk so much about enterprise, API usage, safety layers, and integration. They are not merely trying to sell a product. They are trying to become infrastructure. The most important AI companies are not only competing to make the best assistant. They are competing to become the mandatory cognitive intermediary between organisations and their own work.

Amodei himself is interesting because he is trying to hold two positions at once, and he is more candid about that than most. He insists there has been no conversion from optimism to pessimism, only two parallel visions he has long held in his head. One is the bright future in which powerful AI helps cure disease, accelerate science, and improve human welfare. The other is the warning that the same capabilities create grave dangers if governance, safety, and public understanding fail to keep pace.

He wants his audience to accept both claims at the same time: that AI may produce extraordinary abundance, and that the route to that abundance runs through severe systemic risk. That duality is not an incidental feature of his worldview. It is the worldview. Amodei is selling both abundance and alarm, and he thinks those positions are complementary rather than contradictory.

There is a political skill in that posture. It allows him to speak at once to capital, to policymakers, and to the risk-conscious public. Investors hear frontier scale and inevitability. Governments hear responsibility and prudence. Workers hear disruption and preparation. This is not confusion. It is strategy.

The charitable reading is that someone building systems this powerful is right to think in both registers at once. The less charitable reading is that this is the ideal posture for a frontier lab chief: promise civilisational upside, acknowledge grave danger, then present your own company as the institution mature enough to manage the transition.

That is where Telegraph.com’s opinion, and this article’s hard question, sit. Why should the public trust the firms building the wave to govern its impact?

In his YouTube interviews, Amodei acknowledges the concentration-of-power problem more openly than many of his peers. Anthropic points to its Public Benefit Corporation status and its Long-Term Benefit Trust as evidence that it is not just another ordinary profit maximiser. That matters. It may even be materially better than the governance arrangements of some rivals. But it does not resolve the legitimacy problem.

Why not? Because internal elite governance is not democratic control. A self-designed trust structure is still a mechanism created inside the firm’s own constitutional order. It may be better than nothing. It may even be sincere. But it is still a private answer to a public power problem.

It does not answer our basic political question of why a narrow cluster of companies, investors, executives, and trustees should be trusted to set the terms of a transition that could reshape labour markets, state capacity, information systems, and security. That is the contradiction at the centre of the entire AI moment. The same firms racing to dominate the model layer also want to be seen as the responsible custodians of the world they are helping destabilise. Anthropic has not solved that contradiction. It has simply built a more sophisticated answer to it.

Labour disruption is real. Coding and computer work are where the pressure is most visible first. The infrastructure question is also real. Frontier labs are plainly trying to embed themselves beneath the modern workplace rather than merely alongside it. The governance question is real as well. Anthropic has made visible efforts to distinguish itself on safety and institutional form. But none of those facts settles whether corporate self-stewardship is enough.

There is also a limit case that should be stated plainly. Amodei’s direction of travel may be right while his most compressed timelines, in our opinion, still prove too aggressive. Organisations do not move at the pace of demos. Adoption is uneven. Capability in principle is not the same thing as stable deployment at scale. That does not invalidate the thesis. It simply means the strongest version of the argument must remain disciplined. Coding is the first breached domain. That is already visible. The claim that most white-collar work will rapidly follow is more speculative and should be treated as such.

Still, the main point survives. The coding debate is a decoy if treated too narrowly. What Amodei is really describing, we think, is the arrival of firms that want to become cognitive infrastructure. Their power will not rest only on apps, personalities, or subscriptions. It will rest on embedding themselves inside the routines through which institutions think, write, calculate, classify, and decide.

Once that is the frame, the argument changes. The question is no longer whether AI can write code. The question is who controls the system that mediates modern thought work, and what right they have to govern the consequences of that control.

That is why Dario Amodei matters. Not because he made a dramatic remark about coding, but because, in our opinion, he is one of the clearest exponents of the emerging settlement. Build the model. Integrate it everywhere. Warn that the risks are grave. Promise that your own institution is unusually responsible. Ask to be judged by your actions.

It is a coherent position. It is also one that should be met with scrutiny rather than gratitude. The wave may be real. That does not mean the wave makers get to appoint themselves as its legitimate governors.
