The New Coding: If You Are a Software Developer, This Is Your Future

AI agents are no longer just assisting developers. They are executing the work. The role is shifting from writing code to directing systems that generate, test, and deploy it.

Programming has stopped being mainly about writing code. It is becoming the discipline of directing an AI agent that can read a codebase, understand a task, make changes, run commands, test its own work, and return with something close to a finished result.

In our experience, this is not just a better tool. It is a different working environment. The old developer wrote instructions for the computer. The new developer writes instructions for an agent that writes instructions for the computer. That extra layer changes everything.

The mistake is to think this is only about productivity. It is not. It changes where judgment sits. It changes what skill means. It changes what risk looks like. Above all, it changes the unit of work. The unit is no longer a line of code. It is a task, a branch, a pull request, a deployment, a repair, or a whole feature.

The new stack

Instruction replaces raw coding.

Context replaces configuration.

Verification replaces trust.

The developer moves from builder to director.

Software began as explicit instruction. A developer wrote precise rules. The computer followed them. Every edge case had to be anticipated in advance.

Then came machine learning. The developer no longer wrote every rule. They gathered data, shaped objectives, trained models, and allowed behaviour to emerge statistically.

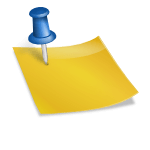

Now comes the third layer: agentic execution. The developer gives the system a goal, context, constraints, and proof requirements. The agent explores the codebase, identifies relevant files, proposes a route, edits the system, runs checks, and reports back.

This is the point where programming stops being deterministic construction and becomes orchestration.

The central paradox

The machine can do more, but the human must understand more.

That paradox is already visible in the modern pull request workflow. In the old model, a developer read an issue, opened the project, found the relevant files, wrote the code, ran tests, committed changes, and opened a pull request.

In the agentic model, the issue itself becomes the work order. A developer can assign the issue to an AI coding agent, add instructions, specify the branch, define test requirements, and let the agent prepare a pull request.

That is a structural change. The issue tracker is no longer just a management system. It becomes an execution surface.

But the human role has not disappeared. The human still has to write the issue properly. A vague issue produces vague work. A precise issue with constraints, forbidden files, testing rules, and acceptance criteria gives the agent something it can verify.

Scenario one: the issue becomes the work order

The new skill is not “write this function”.

The new skill is “define this task so clearly that an agent can complete it and prove it is complete”.
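As an illustration, a work order of this kind can be written as data that a tool can check. This is a minimal Python sketch under stated assumptions: `WorkOrder`, its fields and the `pytest` default are hypothetical names chosen for illustration, not the format of any particular issue tracker or coding agent.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch


@dataclass
class WorkOrder:
    """A hypothetical structured issue that an agent could be asked to complete."""
    goal: str
    branch: str
    # Glob patterns for files the agent must not touch (illustrative).
    forbidden_paths: list[str] = field(default_factory=list)
    # Assumed test runner; a real project would use its own command.
    test_command: str = "pytest"
    acceptance_criteria: list[str] = field(default_factory=list)

    def violations(self, changed_files: list[str]) -> list[str]:
        """Return every changed file that matches a forbidden pattern."""
        return [
            path
            for path in changed_files
            if any(fnmatch(path, pattern) for pattern in self.forbidden_paths)
        ]

    def is_verifiable(self) -> bool:
        """A task is only checkable if it names a test command and at least one criterion."""
        return bool(self.test_command) and len(self.acceptance_criteria) > 0
```

For example, with `forbidden_paths=["migrations/*", ".env*"]`, calling `order.violations(["reports/export.py", "migrations/0042_add_column.py"])` returns `["migrations/0042_add_column.py"]`, so the agent's pull request can be flagged before a human reads it.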

The second example is debugging. In the old model, the developer reproduced the bug, inspected logs, searched the codebase, made a hypothesis, patched the code, and tested the result.

In the new model, the developer hands the agent a structured investigation brief. The agent can read files, run commands, test hypotheses, inspect logs, identify the relevant path, and return with a patch. But it performs best when the human defines the proof standard.

Scenario two: debugging becomes an investigation brief

Here is the error.

Here is the expected behaviour.

Here is the current behaviour.

Find the relevant code path.

Do not edit until you have proved the cause.

Patch only the affected files.

Run the failing test before and after.

Report the diff and residual risk.
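The brief's proof standard can also be made mechanical. In this minimal sketch, the function names and any test paths are hypothetical; the idea is only that a patch is rejected unless the failing test fails before the change, passes after it, and the diff stays inside the files the brief allows.

```python
import subprocess


def run_check(command: list[str]) -> bool:
    """Run a test command and report whether it exited successfully."""
    result = subprocess.run(command, capture_output=True, text=True)
    return result.returncode == 0


def review_fix(passed_before: bool, passed_after: bool,
               changed_files: set[str], affected_files: set[str]) -> list[str]:
    """Apply the brief's proof standard and return every reason to reject the patch."""
    problems = []
    if passed_before:
        problems.append("test passed before the patch, so the bug was never reproduced")
    if not passed_after:
        problems.append("test still fails after the patch")
    stray = sorted(changed_files - affected_files)
    if stray:
        problems.append(f"patch touched files outside the brief: {', '.join(stray)}")
    return problems
```

In use, `run_check` would be called once before and once after the agent's patch (for instance with a hypothetical `["pytest", "tests/test_invoice.py"]`), and an empty list from `review_fix` means the patch met the standard. A test that already passed before the change is treated as a failure of proof, because it never demonstrated the bug.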

Without that proof, the agent may return something that looks fixed but is not. This is one of the most dangerous features of the new coding: coherent failure. The result can be plausible, tidy, and wrong.

The third example is deployment. Many projects are easy to build locally and difficult to deploy. The code may work, but DNS, environment variables, secrets, permissions, build settings, ports, database migrations, and hosting rules become the real challenge.

In the old model, deployment knowledge lived in scattered documentation, dashboards, and memory. In the agentic model, the developer can turn deployment into a controlled operating instruction.

Scenario three: deployment becomes the real test

Deploy this application.

Read the project configuration.

Identify required environment variables.

Do not touch production data.

Use staging first.

Run a smoke test.

Confirm the live endpoint.

Report exactly what changed.
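Some of these deployment steps can be expressed as checks that run before anything is pushed. The sketch below assumes a simple two-environment setup named `staging` and `production`; the function names and environment variable names are hypothetical. `smoke_test` uses only the Python standard library and treats any 2xx response as healthy, a deliberately crude definition.

```python
import os
import urllib.request
from typing import Mapping, Optional


def missing_env_vars(required: list[str], env: Optional[Mapping[str, str]] = None) -> list[str]:
    """Return required variables that are absent or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


def smoke_test(url: str, timeout: float = 10.0) -> bool:
    """Return True if the endpoint answers with an HTTP 2xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except OSError:
        # URLError, HTTPError and socket timeouts are all OSError subclasses.
        return False


def plan_deploy(target: str, staging_passed: bool, missing: list[str]) -> str:
    """Apply the instruction's ordering: configuration first, staging before production."""
    if missing:
        return "blocked: missing " + ", ".join(missing)
    if target == "production" and not staging_passed:
        return "blocked: deploy to staging and pass the smoke test first"
    return f"proceed: deploy to {target}"
```

For example, `plan_deploy("production", staging_passed=False, missing=[])` refuses to go straight to production, which mirrors the "use staging first" line of the instruction.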

That is where the system is heading. The agent is not merely writing functions. It is operating across the entire software lifecycle.

But this is also where risk concentrates. If permissions are too broad or safeguards are missing, the system can cause real damage very quickly. The answer is not to avoid agents. The answer is to narrow their authority, separate staging from production, insist on backups, and require proof after each material change.
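Narrowing authority can start with a gate that every agent action passes through. This is a minimal sketch, assuming a hypothetical `Action` record and an illustrative allowlist; a real setup would also enforce these limits through credentials and roles rather than relying on a check like this alone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A hypothetical record of something an agent wants to do."""
    command: str      # e.g. "run_tests", "deploy", "migrate"
    environment: str  # "staging" or "production"
    writes_data: bool


# Illustrative allowlist; anything not named here is refused.
ALLOWED_COMMANDS = {"read_logs", "run_tests", "deploy", "migrate"}


def authorise(action: Action, backup_confirmed: bool, human_approved: bool) -> tuple[bool, str]:
    """Decide whether an agent action may run, and say why."""
    if action.command not in ALLOWED_COMMANDS:
        return False, f"'{action.command}' is not on the allowlist"
    if action.environment == "production" and action.writes_data:
        # Production writes need both a verified backup and a human sign-off.
        if not backup_confirmed:
            return False, "production writes require a confirmed backup"
        if not human_approved:
            return False, "production writes require human approval"
    return True, "allowed"
```

The design choice is to refuse by default: a command the allowlist does not name is blocked even in staging, and only read-only or staging actions pass without extra conditions.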

The fourth example is code review. In the old model, one developer reviewed another developer’s work. In the new model, review becomes adversarial. One agent writes the patch. Another tries to break it. A third checks tests, security, regressions, and structure. The human oversees the dispute.

Scenario four: code review becomes adversarial testing

One agent implements.

One agent attacks.

One agent simplifies.

One agent verifies.
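This command structure can be sketched as a set of reviewing roles whose objections are collected for a human to rule on. In this minimal Python sketch the roles are plain functions; `attacker` and `verifier` are toy stand-ins for agents, and their string-matching rules are purely illustrative. A simplifying role would be one more entry in the same dictionary.

```python
from typing import Callable

# A reviewing role takes a patch and returns its objections (empty means no objection).
Review = Callable[[str], list[str]]


def attacker(patch: str) -> list[str]:
    # Toy stand-in for an agent probing for dangerous constructs.
    return ["uses eval on input"] if "eval(" in patch else []


def verifier(patch: str) -> list[str]:
    # Toy stand-in for an agent that insists every patch ships with a test.
    return [] if "def test_" in patch else ["no test added"]


def adversarial_review(patch: str, reviewers: dict[str, Review]) -> dict[str, list[str]]:
    """Run every reviewing role over the patch and collect objections by role."""
    return {role: review(patch) for role, review in reviewers.items()}


def human_decision(findings: dict[str, list[str]]) -> str:
    """Summarise the dispute for the human; any open objection blocks the merge."""
    open_issues = {role: items for role, items in findings.items() if items}
    if not open_issues:
        return "merge"
    return "reject: " + "; ".join(
        f"{role}: {', '.join(items)}" for role, items in open_issues.items()
    )
```

For example, reviewing the patch `"x = eval(s)"` with both roles produces objections from each, so `human_decision` reports a rejection rather than a merge.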

That is not coding as typing. It is coding as command structure.

A large share of modern software exists because machines previously lacked interpretation. Installers, scripts, setup guides, dashboards, and procedural documentation often compensate for systems that cannot adapt.

In the new environment, that burden begins to shift. The agent can inspect the machine, read errors, infer missing steps, test commands, and adjust. This does not remove software. It removes unnecessary scaffolding.

The uncomfortable implication is that some complexity was never fundamental. It was a workaround.

The risk principle

AI agents are strongest where results can be checked automatically.

They are weakest where correctness depends on context, intent, or judgment.

The boundary between the two is where failure concentrates.

These systems are powerful but uneven. They can restructure large codebases and still make simple mistakes. They can pass complex tests and still misunderstand obvious context.

That is why coding is being automated so quickly. Code can be tested. Systems can be checked. Errors produce signals. But architecture, security, and design are harder to verify. A system can run and still be flawed.

That is where human responsibility remains.

The developer’s role is therefore changing. Low-level detail is being absorbed. Syntax matters less. Boilerplate matters less. But structure matters more. Specification matters more. Verification matters more.

The developer defines what should exist and how it should behave. The agent fills in the details. That is not less work. It is different work, with greater consequences.

What now matters

What should be built.

Why it matters.

How to verify it.

When to trust the result.

When to reject it.

As execution becomes cheap, direction becomes scarce. The valuable skill is judgment. The best developers will not simply use AI. They will control it precisely.

Automation depends on verification. If the result can be checked, the system improves quickly. If it cannot, the human remains the bottleneck.
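That dependency can be made explicit as a loop: generate a candidate, check it, retry a limited number of times, and escalate to a human when no automatic check exists or every attempt fails. The function name, the three-attempt default and the status strings are assumptions made for this sketch.

```python
from typing import Callable, Optional


def run_with_verification(
    generate: Callable[[int], str],
    check: Optional[Callable[[str], bool]],
    max_attempts: int = 3,
) -> tuple[str, Optional[str]]:
    """Generate, check and retry; escalate when checking is impossible or keeps failing."""
    if check is None:
        # No automatic check exists, so the draft goes straight to a human.
        return "needs_human", generate(0)
    for attempt in range(max_attempts):
        candidate = generate(attempt)
        if check(candidate):
            return "accepted", candidate
    # Every attempt failed the check: the human is the bottleneck again.
    return "needs_human", None
```

The two escalation paths correspond to the two halves of the argument: where a check exists, the loop can run without a person; where it does not, every result waits for human judgment.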

Understanding cannot be outsourced in the same way execution can. The system can generate answers. It cannot decide what matters. The system can build a feature. It cannot guarantee that the feature should exist.

Most systems are still built for humans. Interfaces assume human operators. Documentation assumes human readers. Workflows assume human attention.

That will change. Systems will increasingly be designed for agents to read, act on, test, and report. Developers will supervise rather than execute.

The direction of travel

Programming is becoming less about writing every instruction.

It is becoming more about shaping the conditions under which instructions are produced.

That is not just faster coding.

It is the new coding.

You might also like to read on Telegraph.com